Metadata

Namespace: Camera

Enums

| Name | |
|---|---|
| enum | **Tags** { ACAMERA_COLOR_CORRECTION_MODE = |

acamera_metadata_section_start.ACAMERA_COLOR_CORRECTION_START, [ACAMERA_COLOR_CORRECTION_TRANSFORM](/docs/21-Aug-2024/unity-api/api/MagicLeap/Android/NDK/Camera/Metadata#enums-acamera-color-correction-transform) =

acamera_metadata_section_start.ACAMERA_COLOR_CORRECTION_START + 1, [ACAMERA_COLOR_CORRECTION_GAINS](/docs/21-Aug-2024/unity-api/api/MagicLeap/Android/NDK/Camera/Metadata#enums-acamera-color-correction-gains) =

acamera_metadata_section_start.ACAMERA_COLOR_CORRECTION_START + 2, [ACAMERA_COLOR_CORRECTION_ABERRATION_MODE](/docs/21-Aug-2024/unity-api/api/MagicLeap/Android/NDK/Camera/Metadata#enums-acamera-color-correction-aberration-mode) =

acamera_metadata_section_start.ACAMERA_COLOR_CORRECTION_START + 3, [ACAMERA_COLOR_CORRECTION_AVAILABLE_ABERRATION_MODES](/docs/21-Aug-2024/unity-api/api/MagicLeap/Android/NDK/Camera/Metadata#enums-acamera-color-correction-available-aberration-modes) =

acamera_metadata_section_start.ACAMERA_COLOR_CORRECTION_START + 4, [ACAMERA_COLOR_CORRECTION_END](/docs/21-Aug-2024/unity-api/api/MagicLeap/Android/NDK/Camera/Metadata#enums-acamera-color-correction-end), [ACAMERA_CONTROL_AE_ANTIBANDING_MODE](/docs/21-Aug-2024/unity-api/api/MagicLeap/Android/NDK/Camera/Metadata#enums-acamera-control-ae-antibanding-mode) =

acamera_metadata_section_start.ACAMERA_CONTROL_START, [ACAMERA_CONTROL_AE_EXPOSURE_COMPENSATION](/docs/21-Aug-2024/unity-api/api/MagicLeap/Android/NDK/Camera/Metadata#enums-acamera-control-ae-exposure-compensation) =

acamera_metadata_section_start.ACAMERA_CONTROL_START + 1, [ACAMERA_CONTROL_AE_LOCK](/docs/21-Aug-2024/unity-api/api/MagicLeap/Android/NDK/Camera/Metadata#enums-acamera-control-ae-lock) =

acamera_metadata_section_start.ACAMERA_CONTROL_START + 2, [ACAMERA_CONTROL_AE_MODE](/docs/21-Aug-2024/unity-api/api/MagicLeap/Android/NDK/Camera/Metadata#enums-acamera-control-ae-mode) =

acamera_metadata_section_start.ACAMERA_CONTROL_START + 3, [ACAMERA_CONTROL_AE_REGIONS](/docs/21-Aug-2024/unity-api/api/MagicLeap/Android/NDK/Camera/Metadata#enums-acamera-control-ae-regions) =

acamera_metadata_section_start.ACAMERA_CONTROL_START + 4, [ACAMERA_CONTROL_AE_TARGET_FPS_RANGE](/docs/21-Aug-2024/unity-api/api/MagicLeap/Android/NDK/Camera/Metadata#enums-acamera-control-ae-target-fps-range) =

acamera_metadata_section_start.ACAMERA_CONTROL_START + 5, [ACAMERA_CONTROL_AE_PRECAPTURE_TRIGGER](/docs/21-Aug-2024/unity-api/api/MagicLeap/Android/NDK/Camera/Metadata#enums-acamera-control-ae-precapture-trigger) =

acamera_metadata_section_start.ACAMERA_CONTROL_START + 6, [ACAMERA_CONTROL_AF_MODE](/docs/21-Aug-2024/unity-api/api/MagicLeap/Android/NDK/Camera/Metadata#enums-acamera-control-af-mode) =

acamera_metadata_section_start.ACAMERA_CONTROL_START + 7, [ACAMERA_CONTROL_AF_REGIONS](/docs/21-Aug-2024/unity-api/api/MagicLeap/Android/NDK/Camera/Metadata#enums-acamera-control-af-regions) =

acamera_metadata_section_start.ACAMERA_CONTROL_START + 8, [ACAMERA_CONTROL_AF_TRIGGER](/docs/21-Aug-2024/unity-api/api/MagicLeap/Android/NDK/Camera/Metadata#enums-acamera-control-af-trigger) =

acamera_metadata_section_start.ACAMERA_CONTROL_START + 9, [ACAMERA_CONTROL_AWB_LOCK](/docs/21-Aug-2024/unity-api/api/MagicLeap/Android/NDK/Camera/Metadata#enums-acamera-control-awb-lock) =

acamera_metadata_section_start.ACAMERA_CONTROL_START + 10, [ACAMERA_CONTROL_AWB_MODE](/docs/21-Aug-2024/unity-api/api/MagicLeap/Android/NDK/Camera/Metadata#enums-acamera-control-awb-mode) =

acamera_metadata_section_start.ACAMERA_CONTROL_START + 11, [ACAMERA_CONTROL_AWB_REGIONS](/docs/21-Aug-2024/unity-api/api/MagicLeap/Android/NDK/Camera/Metadata#enums-acamera-control-awb-regions) =

acamera_metadata_section_start.ACAMERA_CONTROL_START + 12, [ACAMERA_CONTROL_CAPTURE_INTENT](/docs/21-Aug-2024/unity-api/api/MagicLeap/Android/NDK/Camera/Metadata#enums-acamera-control-capture-intent) =

acamera_metadata_section_start.ACAMERA_CONTROL_START + 13, [ACAMERA_CONTROL_EFFECT_MODE](/docs/21-Aug-2024/unity-api/api/MagicLeap/Android/NDK/Camera/Metadata#enums-acamera-control-effect-mode) =

acamera_metadata_section_start.ACAMERA_CONTROL_START + 14, [ACAMERA_CONTROL_MODE](/docs/21-Aug-2024/unity-api/api/MagicLeap/Android/NDK/Camera/Metadata#enums-acamera-control-mode) =

acamera_metadata_section_start.ACAMERA_CONTROL_START + 15, [ACAMERA_CONTROL_SCENE_MODE](/docs/21-Aug-2024/unity-api/api/MagicLeap/Android/NDK/Camera/Metadata#enums-acamera-control-scene-mode) =

acamera_metadata_section_start.ACAMERA_CONTROL_START + 16, [ACAMERA_CONTROL_VIDEO_STABILIZATION_MODE](/docs/21-Aug-2024/unity-api/api/MagicLeap/Android/NDK/Camera/Metadata#enums-acamera-control-video-stabilization-mode) =

acamera_metadata_section_start.ACAMERA_CONTROL_START + 17, [ACAMERA_CONTROL_AE_AVAILABLE_ANTIBANDING_MODES](/docs/21-Aug-2024/unity-api/api/MagicLeap/Android/NDK/Camera/Metadata#enums-acamera-control-ae-available-antibanding-modes) =

acamera_metadata_section_start.ACAMERA_CONTROL_START + 18, [ACAMERA_CONTROL_AE_AVAILABLE_MODES](/docs/21-Aug-2024/unity-api/api/MagicLeap/Android/NDK/Camera/Metadata#enums-acamera-control-ae-available-modes) =

acamera_metadata_section_start.ACAMERA_CONTROL_START + 19, [ACAMERA_CONTROL_AE_AVAILABLE_TARGET_FPS_RANGES](/docs/21-Aug-2024/unity-api/api/MagicLeap/Android/NDK/Camera/Metadata#enums-acamera-control-ae-available-target-fps-ranges) =

acamera_metadata_section_start.ACAMERA_CONTROL_START + 20, [ACAMERA_CONTROL_AE_COMPENSATION_RANGE](/docs/21-Aug-2024/unity-api/api/MagicLeap/Android/NDK/Camera/Metadata#enums-acamera-control-ae-compensation-range) =

acamera_metadata_section_start.ACAMERA_CONTROL_START + 21, [ACAMERA_CONTROL_AE_COMPENSATION_STEP](/docs/21-Aug-2024/unity-api/api/MagicLeap/Android/NDK/Camera/Metadata#enums-acamera-control-ae-compensation-step) =

acamera_metadata_section_start.ACAMERA_CONTROL_START + 22, [ACAMERA_CONTROL_AF_AVAILABLE_MODES](/docs/21-Aug-2024/unity-api/api/MagicLeap/Android/NDK/Camera/Metadata#enums-acamera-control-af-available-modes) =

acamera_metadata_section_start.ACAMERA_CONTROL_START + 23, [ACAMERA_CONTROL_AVAILABLE_EFFECTS](/docs/21-Aug-2024/unity-api/api/MagicLeap/Android/NDK/Camera/Metadata#enums-acamera-control-available-effects) =

acamera_metadata_section_start.ACAMERA_CONTROL_START + 24, [ACAMERA_CONTROL_AVAILABLE_SCENE_MODES](/docs/21-Aug-2024/unity-api/api/MagicLeap/Android/NDK/Camera/Metadata#enums-acamera-control-available-scene-modes) =

acamera_metadata_section_start.ACAMERA_CONTROL_START + 25, [ACAMERA_CONTROL_AVAILABLE_VIDEO_STABILIZATION_MODES](/docs/21-Aug-2024/unity-api/api/MagicLeap/Android/NDK/Camera/Metadata#enums-acamera-control-available-video-stabilization-modes) =

acamera_metadata_section_start.ACAMERA_CONTROL_START + 26, [ACAMERA_CONTROL_AWB_AVAILABLE_MODES](/docs/21-Aug-2024/unity-api/api/MagicLeap/Android/NDK/Camera/Metadata#enums-acamera-control-awb-available-modes) =

acamera_metadata_section_start.ACAMERA_CONTROL_START + 27, [ACAMERA_CONTROL_MAX_REGIONS](/docs/21-Aug-2024/unity-api/api/MagicLeap/Android/NDK/Camera/Metadata#enums-acamera-control-max-regions) =

acamera_metadata_section_start.ACAMERA_CONTROL_START + 28, [ACAMERA_CONTROL_AE_STATE](/docs/21-Aug-2024/unity-api/api/MagicLeap/Android/NDK/Camera/Metadata#enums-acamera-control-ae-state) =

acamera_metadata_section_start.ACAMERA_CONTROL_START + 31, [ACAMERA_CONTROL_AF_STATE](/docs/21-Aug-2024/unity-api/api/MagicLeap/Android/NDK/Camera/Metadata#enums-acamera-control-af-state) =

acamera_metadata_section_start.ACAMERA_CONTROL_START + 32, [ACAMERA_CONTROL_AWB_STATE](/docs/21-Aug-2024/unity-api/api/MagicLeap/Android/NDK/Camera/Metadata#enums-acamera-control-awb-state) =

acamera_metadata_section_start.ACAMERA_CONTROL_START + 34, [ACAMERA_CONTROL_AE_LOCK_AVAILABLE](/docs/21-Aug-2024/unity-api/api/MagicLeap/Android/NDK/Camera/Metadata#enums-acamera-control-ae-lock-available) =

acamera_metadata_section_start.ACAMERA_CONTROL_START + 36, [ACAMERA_CONTROL_AWB_LOCK_AVAILABLE](/docs/21-Aug-2024/unity-api/api/MagicLeap/Android/NDK/Camera/Metadata#enums-acamera-control-awb-lock-available) =

acamera_metadata_section_start.ACAMERA_CONTROL_START + 37, [ACAMERA_CONTROL_AVAILABLE_MODES](/docs/21-Aug-2024/unity-api/api/MagicLeap/Android/NDK/Camera/Metadata#enums-acamera-control-available-modes) =

acamera_metadata_section_start.ACAMERA_CONTROL_START + 38, [ACAMERA_CONTROL_POST_RAW_SENSITIVITY_BOOST_RANGE](/docs/21-Aug-2024/unity-api/api/MagicLeap/Android/NDK/Camera/Metadata#enums-acamera-control-post-raw-sensitivity-boost-range) =

acamera_metadata_section_start.ACAMERA_CONTROL_START + 39, [ACAMERA_CONTROL_POST_RAW_SENSITIVITY_BOOST](/docs/21-Aug-2024/unity-api/api/MagicLeap/Android/NDK/Camera/Metadata#enums-acamera-control-post-raw-sensitivity-boost) =

acamera_metadata_section_start.ACAMERA_CONTROL_START + 40, [ACAMERA_CONTROL_ENABLE_ZSL](/docs/21-Aug-2024/unity-api/api/MagicLeap/Android/NDK/Camera/Metadata#enums-acamera-control-enable-zsl) =

acamera_metadata_section_start.ACAMERA_CONTROL_START + 41, [ACAMERA_CONTROL_AF_SCENE_CHANGE](/docs/21-Aug-2024/unity-api/api/MagicLeap/Android/NDK/Camera/Metadata#enums-acamera-control-af-scene-change) =

acamera_metadata_section_start.ACAMERA_CONTROL_START + 42, [ACAMERA_CONTROL_AVAILABLE_EXTENDED_SCENE_MODE_MAX_SIZES](/docs/21-Aug-2024/unity-api/api/MagicLeap/Android/NDK/Camera/Metadata#enums-acamera-control-available-extended-scene-mode-max-sizes) =

acamera_metadata_section_start.ACAMERA_CONTROL_START + 43, [ACAMERA_CONTROL_AVAILABLE_EXTENDED_SCENE_MODE_ZOOM_RATIO_RANGES](/docs/21-Aug-2024/unity-api/api/MagicLeap/Android/NDK/Camera/Metadata#enums-acamera-control-available-extended-scene-mode-zoom-ratio-ranges) =

acamera_metadata_section_start.ACAMERA_CONTROL_START + 44, [ACAMERA_CONTROL_EXTENDED_SCENE_MODE](/docs/21-Aug-2024/unity-api/api/MagicLeap/Android/NDK/Camera/Metadata#enums-acamera-control-extended-scene-mode) =

acamera_metadata_section_start.ACAMERA_CONTROL_START + 45, [ACAMERA_CONTROL_ZOOM_RATIO_RANGE](/docs/21-Aug-2024/unity-api/api/MagicLeap/Android/NDK/Camera/Metadata#enums-acamera-control-zoom-ratio-range) =

acamera_metadata_section_start.ACAMERA_CONTROL_START + 46, [ACAMERA_CONTROL_ZOOM_RATIO](/docs/21-Aug-2024/unity-api/api/MagicLeap/Android/NDK/Camera/Metadata#enums-acamera-control-zoom-ratio) =

acamera_metadata_section_start.ACAMERA_CONTROL_START + 47, [ACAMERA_CONTROL_END](/docs/21-Aug-2024/unity-api/api/MagicLeap/Android/NDK/Camera/Metadata#enums-acamera-control-end), [ACAMERA_EDGE_MODE](/docs/21-Aug-2024/unity-api/api/MagicLeap/Android/NDK/Camera/Metadata#enums-acamera-edge-mode) =

acamera_metadata_section_start.ACAMERA_EDGE_START, [ACAMERA_EDGE_AVAILABLE_EDGE_MODES](/docs/21-Aug-2024/unity-api/api/MagicLeap/Android/NDK/Camera/Metadata#enums-acamera-edge-available-edge-modes) =

acamera_metadata_section_start.ACAMERA_EDGE_START + 2, [ACAMERA_EDGE_END](/docs/21-Aug-2024/unity-api/api/MagicLeap/Android/NDK/Camera/Metadata#enums-acamera-edge-end), [ACAMERA_FLASH_MODE](/docs/21-Aug-2024/unity-api/api/MagicLeap/Android/NDK/Camera/Metadata#enums-acamera-flash-mode) =

acamera_metadata_section_start.ACAMERA_FLASH_START + 2, [ACAMERA_FLASH_STATE](/docs/21-Aug-2024/unity-api/api/MagicLeap/Android/NDK/Camera/Metadata#enums-acamera-flash-state) =

acamera_metadata_section_start.ACAMERA_FLASH_START + 5, [ACAMERA_FLASH_END](/docs/21-Aug-2024/unity-api/api/MagicLeap/Android/NDK/Camera/Metadata#enums-acamera-flash-end), [ACAMERA_FLASH_INFO_AVAILABLE](/docs/21-Aug-2024/unity-api/api/MagicLeap/Android/NDK/Camera/Metadata#enums-acamera-flash-info-available) =

acamera_metadata_section_start.ACAMERA_FLASH_INFO_START, [ACAMERA_FLASH_INFO_STRENGTH_MAXIMUM_LEVEL](/docs/21-Aug-2024/unity-api/api/MagicLeap/Android/NDK/Camera/Metadata#enums-acamera-flash-info-strength-maximum-level) =

acamera_metadata_section_start.ACAMERA_FLASH_INFO_START + 2, [ACAMERA_FLASH_INFO_STRENGTH_DEFAULT_LEVEL](/docs/21-Aug-2024/unity-api/api/MagicLeap/Android/NDK/Camera/Metadata#enums-acamera-flash-info-strength-default-level) =

acamera_metadata_section_start.ACAMERA_FLASH_INFO_START + 3, [ACAMERA_FLASH_INFO_END](/docs/21-Aug-2024/unity-api/api/MagicLeap/Android/NDK/Camera/Metadata#enums-acamera-flash-info-end), [ACAMERA_HOT_PIXEL_MODE](/docs/21-Aug-2024/unity-api/api/MagicLeap/Android/NDK/Camera/Metadata#enums-acamera-hot-pixel-mode) =

acamera_metadata_section_start.ACAMERA_HOT_PIXEL_START, [ACAMERA_HOT_PIXEL_AVAILABLE_HOT_PIXEL_MODES](/docs/21-Aug-2024/unity-api/api/MagicLeap/Android/NDK/Camera/Metadata#enums-acamera-hot-pixel-available-hot-pixel-modes) =

acamera_metadata_section_start.ACAMERA_HOT_PIXEL_START + 1, [ACAMERA_HOT_PIXEL_END](/docs/21-Aug-2024/unity-api/api/MagicLeap/Android/NDK/Camera/Metadata#enums-acamera-hot-pixel-end), [ACAMERA_JPEG_GPS_COORDINATES](/docs/21-Aug-2024/unity-api/api/MagicLeap/Android/NDK/Camera/Metadata#enums-acamera-jpeg-gps-coordinates) =

acamera_metadata_section_start.ACAMERA_JPEG_START, [ACAMERA_JPEG_GPS_PROCESSING_METHOD](/docs/21-Aug-2024/unity-api/api/MagicLeap/Android/NDK/Camera/Metadata#enums-acamera-jpeg-gps-processing-method) =

acamera_metadata_section_start.ACAMERA_JPEG_START + 1, [ACAMERA_JPEG_GPS_TIMESTAMP](/docs/21-Aug-2024/unity-api/api/MagicLeap/Android/NDK/Camera/Metadata#enums-acamera-jpeg-gps-timestamp) =

acamera_metadata_section_start.ACAMERA_JPEG_START + 2, [ACAMERA_JPEG_ORIENTATION](/docs/21-Aug-2024/unity-api/api/MagicLeap/Android/NDK/Camera/Metadata#enums-acamera-jpeg-orientation) =

acamera_metadata_section_start.ACAMERA_JPEG_START + 3, [ACAMERA_JPEG_QUALITY](/docs/21-Aug-2024/unity-api/api/MagicLeap/Android/NDK/Camera/Metadata#enums-acamera-jpeg-quality) =

acamera_metadata_section_start.ACAMERA_JPEG_START + 4, [ACAMERA_JPEG_THUMBNAIL_QUALITY](/docs/21-Aug-2024/unity-api/api/MagicLeap/Android/NDK/Camera/Metadata#enums-acamera-jpeg-thumbnail-quality) =

acamera_metadata_section_start.ACAMERA_JPEG_START + 5, [ACAMERA_JPEG_THUMBNAIL_SIZE](/docs/21-Aug-2024/unity-api/api/MagicLeap/Android/NDK/Camera/Metadata#enums-acamera-jpeg-thumbnail-size) =

acamera_metadata_section_start.ACAMERA_JPEG_START + 6, [ACAMERA_JPEG_AVAILABLE_THUMBNAIL_SIZES](/docs/21-Aug-2024/unity-api/api/MagicLeap/Android/NDK/Camera/Metadata#enums-acamera-jpeg-available-thumbnail-sizes) =

acamera_metadata_section_start.ACAMERA_JPEG_START + 7, [ACAMERA_JPEG_END](/docs/21-Aug-2024/unity-api/api/MagicLeap/Android/NDK/Camera/Metadata#enums-acamera-jpeg-end), [ACAMERA_LENS_APERTURE](/docs/21-Aug-2024/unity-api/api/MagicLeap/Android/NDK/Camera/Metadata#enums-acamera-lens-aperture) =

acamera_metadata_section_start.ACAMERA_LENS_START, [ACAMERA_LENS_FILTER_DENSITY](/docs/21-Aug-2024/unity-api/api/MagicLeap/Android/NDK/Camera/Metadata#enums-acamera-lens-filter-density) =

acamera_metadata_section_start.ACAMERA_LENS_START + 1, [ACAMERA_LENS_FOCAL_LENGTH](/docs/21-Aug-2024/unity-api/api/MagicLeap/Android/NDK/Camera/Metadata#enums-acamera-lens-focal-length) =

acamera_metadata_section_start.ACAMERA_LENS_START + 2, [ACAMERA_LENS_FOCUS_DISTANCE](/docs/21-Aug-2024/unity-api/api/MagicLeap/Android/NDK/Camera/Metadata#enums-acamera-lens-focus-distance) =

acamera_metadata_section_start.ACAMERA_LENS_START + 3, [ACAMERA_LENS_OPTICAL_STABILIZATION_MODE](/docs/21-Aug-2024/unity-api/api/MagicLeap/Android/NDK/Camera/Metadata#enums-acamera-lens-optical-stabilization-mode) =

acamera_metadata_section_start.ACAMERA_LENS_START + 4, [ACAMERA_LENS_FACING](/docs/21-Aug-2024/unity-api/api/MagicLeap/Android/NDK/Camera/Metadata#enums-acamera-lens-facing) =

acamera_metadata_section_start.ACAMERA_LENS_START + 5, [ACAMERA_LENS_POSE_ROTATION](/docs/21-Aug-2024/unity-api/api/MagicLeap/Android/NDK/Camera/Metadata#enums-acamera-lens-pose-rotation) =

acamera_metadata_section_start.ACAMERA_LENS_START + 6, [ACAMERA_LENS_POSE_TRANSLATION](/docs/21-Aug-2024/unity-api/api/MagicLeap/Android/NDK/Camera/Metadata#enums-acamera-lens-pose-translation) =

acamera_metadata_section_start.ACAMERA_LENS_START + 7, [ACAMERA_LENS_FOCUS_RANGE](/docs/21-Aug-2024/unity-api/api/MagicLeap/Android/NDK/Camera/Metadata#enums-acamera-lens-focus-range) =

acamera_metadata_section_start.ACAMERA_LENS_START + 8, [ACAMERA_LENS_STATE](/docs/21-Aug-2024/unity-api/api/MagicLeap/Android/NDK/Camera/Metadata#enums-acamera-lens-state) =

acamera_metadata_section_start.ACAMERA_LENS_START + 9, [ACAMERA_LENS_INTRINSIC_CALIBRATION](/docs/21-Aug-2024/unity-api/api/MagicLeap/Android/NDK/Camera/Metadata#enums-acamera-lens-intrinsic-calibration) =

acamera_metadata_section_start.ACAMERA_LENS_START + 10, [ACAMERA_LENS_RADIAL_DISTORTION](/docs/21-Aug-2024/unity-api/api/MagicLeap/Android/NDK/Camera/Metadata#enums-acamera-lens-radial-distortion) =

acamera_metadata_section_start.ACAMERA_LENS_START + 11, [ACAMERA_LENS_POSE_REFERENCE](/docs/21-Aug-2024/unity-api/api/MagicLeap/Android/NDK/Camera/Metadata#enums-acamera-lens-pose-reference) =

acamera_metadata_section_start.ACAMERA_LENS_START + 12, [ACAMERA_LENS_DISTORTION](/docs/21-Aug-2024/unity-api/api/MagicLeap/Android/NDK/Camera/Metadata#enums-acamera-lens-distortion) =

acamera_metadata_section_start.ACAMERA_LENS_START + 13, [ACAMERA_LENS_DISTORTION_MAXIMUM_RESOLUTION](/docs/21-Aug-2024/unity-api/api/MagicLeap/Android/NDK/Camera/Metadata#enums-acamera-lens-distortion-maximum-resolution) =

acamera_metadata_section_start.ACAMERA_LENS_START + 14, [ACAMERA_LENS_INTRINSIC_CALIBRATION_MAXIMUM_RESOLUTION](/docs/21-Aug-2024/unity-api/api/MagicLeap/Android/NDK/Camera/Metadata#enums-acamera-lens-intrinsic-calibration-maximum-resolution) =

acamera_metadata_section_start.ACAMERA_LENS_START + 15, [ACAMERA_LENS_END](/docs/21-Aug-2024/unity-api/api/MagicLeap/Android/NDK/Camera/Metadata#enums-acamera-lens-end), [ACAMERA_LENS_INFO_AVAILABLE_APERTURES](/docs/21-Aug-2024/unity-api/api/MagicLeap/Android/NDK/Camera/Metadata#enums-acamera-lens-info-available-apertures) =

acamera_metadata_section_start.ACAMERA_LENS_INFO_START, [ACAMERA_LENS_INFO_AVAILABLE_FILTER_DENSITIES](/docs/21-Aug-2024/unity-api/api/MagicLeap/Android/NDK/Camera/Metadata#enums-acamera-lens-info-available-filter-densities) =

acamera_metadata_section_start.ACAMERA_LENS_INFO_START + 1, [ACAMERA_LENS_INFO_AVAILABLE_FOCAL_LENGTHS](/docs/21-Aug-2024/unity-api/api/MagicLeap/Android/NDK/Camera/Metadata#enums-acamera-lens-info-available-focal-lengths) =

acamera_metadata_section_start.ACAMERA_LENS_INFO_START + 2, [ACAMERA_LENS_INFO_AVAILABLE_OPTICAL_STABILIZATION](/docs/21-Aug-2024/unity-api/api/MagicLeap/Android/NDK/Camera/Metadata#enums-acamera-lens-info-available-optical-stabilization) =

acamera_metadata_section_start.ACAMERA_LENS_INFO_START + 3, [ACAMERA_LENS_INFO_HYPERFOCAL_DISTANCE](/docs/21-Aug-2024/unity-api/api/MagicLeap/Android/NDK/Camera/Metadata#enums-acamera-lens-info-hyperfocal-distance) =

acamera_metadata_section_start.ACAMERA_LENS_INFO_START + 4, [ACAMERA_LENS_INFO_MINIMUM_FOCUS_DISTANCE](/docs/21-Aug-2024/unity-api/api/MagicLeap/Android/NDK/Camera/Metadata#enums-acamera-lens-info-minimum-focus-distance) =

acamera_metadata_section_start.ACAMERA_LENS_INFO_START + 5, [ACAMERA_LENS_INFO_SHADING_MAP_SIZE](/docs/21-Aug-2024/unity-api/api/MagicLeap/Android/NDK/Camera/Metadata#enums-acamera-lens-info-shading-map-size) =

acamera_metadata_section_start.ACAMERA_LENS_INFO_START + 6, [ACAMERA_LENS_INFO_FOCUS_DISTANCE_CALIBRATION](/docs/21-Aug-2024/unity-api/api/MagicLeap/Android/NDK/Camera/Metadata#enums-acamera-lens-info-focus-distance-calibration) =

acamera_metadata_section_start.ACAMERA_LENS_INFO_START + 7, [ACAMERA_LENS_INFO_END](/docs/21-Aug-2024/unity-api/api/MagicLeap/Android/NDK/Camera/Metadata#enums-acamera-lens-info-end), [ACAMERA_NOISE_REDUCTION_MODE](/docs/21-Aug-2024/unity-api/api/MagicLeap/Android/NDK/Camera/Metadata#enums-acamera-noise-reduction-mode) =

acamera_metadata_section_start.ACAMERA_NOISE_REDUCTION_START, [ACAMERA_NOISE_REDUCTION_AVAILABLE_NOISE_REDUCTION_MODES](/docs/21-Aug-2024/unity-api/api/MagicLeap/Android/NDK/Camera/Metadata#enums-acamera-noise-reduction-available-noise-reduction-modes) =

acamera_metadata_section_start.ACAMERA_NOISE_REDUCTION_START + 2, [ACAMERA_NOISE_REDUCTION_END](/docs/21-Aug-2024/unity-api/api/MagicLeap/Android/NDK/Camera/Metadata#enums-acamera-noise-reduction-end), [ACAMERA_REQUEST_MAX_NUM_OUTPUT_STREAMS](/docs/21-Aug-2024/unity-api/api/MagicLeap/Android/NDK/Camera/Metadata#enums-acamera-request-max-num-output-streams) =

acamera_metadata_section_start.ACAMERA_REQUEST_START + 6, [ACAMERA_REQUEST_PIPELINE_DEPTH](/docs/21-Aug-2024/unity-api/api/MagicLeap/Android/NDK/Camera/Metadata#enums-acamera-request-pipeline-depth) =

acamera_metadata_section_start.ACAMERA_REQUEST_START + 9, [ACAMERA_REQUEST_PIPELINE_MAX_DEPTH](/docs/21-Aug-2024/unity-api/api/MagicLeap/Android/NDK/Camera/Metadata#enums-acamera-request-pipeline-max-depth) =

acamera_metadata_section_start.ACAMERA_REQUEST_START + 10, [ACAMERA_REQUEST_PARTIAL_RESULT_COUNT](/docs/21-Aug-2024/unity-api/api/MagicLeap/Android/NDK/Camera/Metadata#enums-acamera-request-partial-result-count) =

acamera_metadata_section_start.ACAMERA_REQUEST_START + 11, [ACAMERA_REQUEST_AVAILABLE_CAPABILITIES](/docs/21-Aug-2024/unity-api/api/MagicLeap/Android/NDK/Camera/Metadata#enums-acamera-request-available-capabilities) =

acamera_metadata_section_start.ACAMERA_REQUEST_START + 12, [ACAMERA_REQUEST_AVAILABLE_REQUEST_KEYS](/docs/21-Aug-2024/unity-api/api/MagicLeap/Android/NDK/Camera/Metadata#enums-acamera-request-available-request-keys) =

acamera_metadata_section_start.ACAMERA_REQUEST_START + 13, [ACAMERA_REQUEST_AVAILABLE_RESULT_KEYS](/docs/21-Aug-2024/unity-api/api/MagicLeap/Android/NDK/Camera/Metadata#enums-acamera-request-available-result-keys) =

acamera_metadata_section_start.ACAMERA_REQUEST_START + 14, [ACAMERA_REQUEST_AVAILABLE_CHARACTERISTICS_KEYS](/docs/21-Aug-2024/unity-api/api/MagicLeap/Android/NDK/Camera/Metadata#enums-acamera-request-available-characteristics-keys) =

acamera_metadata_section_start.ACAMERA_REQUEST_START + 15, [ACAMERA_REQUEST_AVAILABLE_SESSION_KEYS](/docs/21-Aug-2024/unity-api/api/MagicLeap/Android/NDK/Camera/Metadata#enums-acamera-request-available-session-keys) =

acamera_metadata_section_start.ACAMERA_REQUEST_START + 16, [ACAMERA_REQUEST_AVAILABLE_PHYSICAL_CAMERA_REQUEST_KEYS](/docs/21-Aug-2024/unity-api/api/MagicLeap/Android/NDK/Camera/Metadata#enums-acamera-request-available-physical-camera-request-keys) =

acamera_metadata_section_start.ACAMERA_REQUEST_START + 17, [ACAMERA_REQUEST_AVAILABLE_DYNAMIC_RANGE_PROFILES_MAP](/docs/21-Aug-2024/unity-api/api/MagicLeap/Android/NDK/Camera/Metadata#enums-acamera-request-available-dynamic-range-profiles-map) =

acamera_metadata_section_start.ACAMERA_REQUEST_START + 19, [ACAMERA_REQUEST_END](/docs/21-Aug-2024/unity-api/api/MagicLeap/Android/NDK/Camera/Metadata#enums-acamera-request-end), [ACAMERA_SCALER_CROP_REGION](/docs/21-Aug-2024/unity-api/api/MagicLeap/Android/NDK/Camera/Metadata#enums-acamera-scaler-crop-region) =

acamera_metadata_section_start.ACAMERA_SCALER_START, [ACAMERA_SCALER_AVAILABLE_MAX_DIGITAL_ZOOM](/docs/21-Aug-2024/unity-api/api/MagicLeap/Android/NDK/Camera/Metadata#enums-acamera-scaler-available-max-digital-zoom) =

acamera_metadata_section_start.ACAMERA_SCALER_START + 4, [ACAMERA_SCALER_AVAILABLE_STREAM_CONFIGURATIONS](/docs/21-Aug-2024/unity-api/api/MagicLeap/Android/NDK/Camera/Metadata#enums-acamera-scaler-available-stream-configurations) =

acamera_metadata_section_start.ACAMERA_SCALER_START + 10, [ACAMERA_SCALER_AVAILABLE_MIN_FRAME_DURATIONS](/docs/21-Aug-2024/unity-api/api/MagicLeap/Android/NDK/Camera/Metadata#enums-acamera-scaler-available-min-frame-durations) =

acamera_metadata_section_start.ACAMERA_SCALER_START + 11, [ACAMERA_SCALER_AVAILABLE_STALL_DURATIONS](/docs/21-Aug-2024/unity-api/api/MagicLeap/Android/NDK/Camera/Metadata#enums-acamera-scaler-available-stall-durations) =

acamera_metadata_section_start.ACAMERA_SCALER_START + 12, [ACAMERA_SCALER_CROPPING_TYPE](/docs/21-Aug-2024/unity-api/api/MagicLeap/Android/NDK/Camera/Metadata#enums-acamera-scaler-cropping-type) =

acamera_metadata_section_start.ACAMERA_SCALER_START + 13, [ACAMERA_SCALER_AVAILABLE_RECOMMENDED_STREAM_CONFIGURATIONS](/docs/21-Aug-2024/unity-api/api/MagicLeap/Android/NDK/Camera/Metadata#enums-acamera-scaler-available-recommended-stream-configurations) =

acamera_metadata_section_start.ACAMERA_SCALER_START + 14, [ACAMERA_SCALER_AVAILABLE_RECOMMENDED_INPUT_OUTPUT_FORMATS_MAP](/docs/21-Aug-2024/unity-api/api/MagicLeap/Android/NDK/Camera/Metadata#enums-acamera-scaler-available-recommended-input-output-formats-map) =

acamera_metadata_section_start.ACAMERA_SCALER_START + 15, [ACAMERA_SCALER_AVAILABLE_ROTATE_AND_CROP_MODES](/docs/21-Aug-2024/unity-api/api/MagicLeap/Android/NDK/Camera/Metadata#enums-acamera-scaler-available-rotate-and-crop-modes) =

acamera_metadata_section_start.ACAMERA_SCALER_START + 16, [ACAMERA_SCALER_ROTATE_AND_CROP](/docs/21-Aug-2024/unity-api/api/MagicLeap/Android/NDK/Camera/Metadata#enums-acamera-scaler-rotate-and-crop) =

acamera_metadata_section_start.ACAMERA_SCALER_START + 17, [ACAMERA_SCALER_DEFAULT_SECURE_IMAGE_SIZE](/docs/21-Aug-2024/unity-api/api/MagicLeap/Android/NDK/Camera/Metadata#enums-acamera-scaler-default-secure-image-size) =

acamera_metadata_section_start.ACAMERA_SCALER_START + 18, [ACAMERA_SCALER_PHYSICAL_CAMERA_MULTI_RESOLUTION_STREAM_CONFIGURATIONS](/docs/21-Aug-2024/unity-api/api/MagicLeap/Android/NDK/Camera/Metadata#enums-acamera-scaler-physical-camera-multi-resolution-stream-configurations) =

acamera_metadata_section_start.ACAMERA_SCALER_START + 19, [ACAMERA_SCALER_AVAILABLE_STREAM_CONFIGURATIONS_MAXIMUM_RESOLUTION](/docs/21-Aug-2024/unity-api/api/MagicLeap/Android/NDK/Camera/Metadata#enums-acamera-scaler-available-stream-configurations-maximum-resolution) =

acamera_metadata_section_start.ACAMERA_SCALER_START + 20, [ACAMERA_SCALER_AVAILABLE_MIN_FRAME_DURATIONS_MAXIMUM_RESOLUTION](/docs/21-Aug-2024/unity-api/api/MagicLeap/Android/NDK/Camera/Metadata#enums-acamera-scaler-available-min-frame-durations-maximum-resolution) =

acamera_metadata_section_start.ACAMERA_SCALER_START + 21, [ACAMERA_SCALER_AVAILABLE_STALL_DURATIONS_MAXIMUM_RESOLUTION](/docs/21-Aug-2024/unity-api/api/MagicLeap/Android/NDK/Camera/Metadata#enums-acamera-scaler-available-stall-durations-maximum-resolution) =

acamera_metadata_section_start.ACAMERA_SCALER_START + 22, [ACAMERA_SCALER_MULTI_RESOLUTION_STREAM_SUPPORTED](/docs/21-Aug-2024/unity-api/api/MagicLeap/Android/NDK/Camera/Metadata#enums-acamera-scaler-multi-resolution-stream-supported) =

acamera_metadata_section_start.ACAMERA_SCALER_START + 24, [ACAMERA_SCALER_AVAILABLE_STREAM_USE_CASES](/docs/21-Aug-2024/unity-api/api/MagicLeap/Android/NDK/Camera/Metadata#enums-acamera-scaler-available-stream-use-cases) =

acamera_metadata_section_start.ACAMERA_SCALER_START + 25, [ACAMERA_SCALER_END](/docs/21-Aug-2024/unity-api/api/MagicLeap/Android/NDK/Camera/Metadata#enums-acamera-scaler-end), [ACAMERA_SENSOR_EXPOSURE_TIME](/docs/21-Aug-2024/unity-api/api/MagicLeap/Android/NDK/Camera/Metadata#enums-acamera-sensor-exposure-time) =

acamera_metadata_section_start.ACAMERA_SENSOR_START, [ACAMERA_SENSOR_FRAME_DURATION](/docs/21-Aug-2024/unity-api/api/MagicLeap/Android/NDK/Camera/Metadata#enums-acamera-sensor-frame-duration) =

acamera_metadata_section_start.ACAMERA_SENSOR_START + 1, [ACAMERA_SENSOR_SENSITIVITY](/docs/21-Aug-2024/unity-api/api/MagicLeap/Android/NDK/Camera/Metadata#enums-acamera-sensor-sensitivity) =

acamera_metadata_section_start.ACAMERA_SENSOR_START + 2, [ACAMERA_SENSOR_REFERENCE_ILLUMINANT1](/docs/21-Aug-2024/unity-api/api/MagicLeap/Android/NDK/Camera/Metadata#enums-acamera-sensor-reference-illuminant1) =

acamera_metadata_section_start.ACAMERA_SENSOR_START + 3, [ACAMERA_SENSOR_REFERENCE_ILLUMINANT2](/docs/21-Aug-2024/unity-api/api/MagicLeap/Android/NDK/Camera/Metadata#enums-acamera-sensor-reference-illuminant2) =

acamera_metadata_section_start.ACAMERA_SENSOR_START + 4, [ACAMERA_SENSOR_CALIBRATION_TRANSFORM1](/docs/21-Aug-2024/unity-api/api/MagicLeap/Android/NDK/Camera/Metadata#enums-acamera-sensor-calibration-transform1) =

acamera_metadata_section_start.ACAMERA_SENSOR_START + 5, [ACAMERA_SENSOR_CALIBRATION_TRANSFORM2](/docs/21-Aug-2024/unity-api/api/MagicLeap/Android/NDK/Camera/Metadata#enums-acamera-sensor-calibration-transform2) =

acamera_metadata_section_start.ACAMERA_SENSOR_START + 6, [ACAMERA_SENSOR_COLOR_TRANSFORM1](/docs/21-Aug-2024/unity-api/api/MagicLeap/Android/NDK/Camera/Metadata#enums-acamera-sensor-color-transform1) =

acamera_metadata_section_start.ACAMERA_SENSOR_START + 7, [ACAMERA_SENSOR_COLOR_TRANSFORM2](/docs/21-Aug-2024/unity-api/api/MagicLeap/Android/NDK/Camera/Metadata#enums-acamera-sensor-color-transform2) =

acamera_metadata_section_start.ACAMERA_SENSOR_START + 8, [ACAMERA_SENSOR_FORWARD_MATRIX1](/docs/21-Aug-2024/unity-api/api/MagicLeap/Android/NDK/Camera/Metadata#enums-acamera-sensor-forward-matrix1) =

acamera_metadata_section_start.ACAMERA_SENSOR_START + 9, [ACAMERA_SENSOR_FORWARD_MATRIX2](/docs/21-Aug-2024/unity-api/api/MagicLeap/Android/NDK/Camera/Metadata#enums-acamera-sensor-forward-matrix2) =

acamera_metadata_section_start.ACAMERA_SENSOR_START + 10, [ACAMERA_SENSOR_BLACK_LEVEL_PATTERN](/docs/21-Aug-2024/unity-api/api/MagicLeap/Android/NDK/Camera/Metadata#enums-acamera-sensor-black-level-pattern) =

acamera_metadata_section_start.ACAMERA_SENSOR_START + 12, [ACAMERA_SENSOR_MAX_ANALOG_SENSITIVITY](/docs/21-Aug-2024/unity-api/api/MagicLeap/Android/NDK/Camera/Metadata#enums-acamera-sensor-max-analog-sensitivity) =

acamera_metadata_section_start.ACAMERA_SENSOR_START + 13, [ACAMERA_SENSOR_ORIENTATION](/docs/21-Aug-2024/unity-api/api/MagicLeap/Android/NDK/Camera/Metadata#enums-acamera-sensor-orientation) =

acamera_metadata_section_start.ACAMERA_SENSOR_START + 14, [ACAMERA_SENSOR_TIMESTAMP](/docs/21-Aug-2024/unity-api/api/MagicLeap/Android/NDK/Camera/Metadata#enums-acamera-sensor-timestamp) =

acamera_metadata_section_start.ACAMERA_SENSOR_START + 16, [ACAMERA_SENSOR_NEUTRAL_COLOR_POINT](/docs/21-Aug-2024/unity-api/api/MagicLeap/Android/NDK/Camera/Metadata#enums-acamera-sensor-neutral-color-point) =

acamera_metadata_section_start.ACAMERA_SENSOR_START + 18, [ACAMERA_SENSOR_NOISE_PROFILE](/docs/21-Aug-2024/unity-api/api/MagicLeap/Android/NDK/Camera/Metadata#enums-acamera-sensor-noise-profile) =

acamera_metadata_section_start.ACAMERA_SENSOR_START + 19, [ACAMERA_SENSOR_GREEN_SPLIT](/docs/21-Aug-2024/unity-api/api/MagicLeap/Android/NDK/Camera/Metadata#enums-acamera-sensor-green-split) =

acamera_metadata_section_start.ACAMERA_SENSOR_START + 22, [ACAMERA_SENSOR_TEST_PATTERN_DATA](/docs/21-Aug-2024/unity-api/api/MagicLeap/Android/NDK/Camera/Metadata#enums-acamera-sensor-test-pattern-data) =

acamera_metadata_section_start.ACAMERA_SENSOR_START + 23, [ACAMERA_SENSOR_TEST_PATTERN_MODE](/docs/21-Aug-2024/unity-api/api/MagicLeap/Android/NDK/Camera/Metadata#enums-acamera-sensor-test-pattern-mode) =

acamera_metadata_section_start.ACAMERA_SENSOR_START + 24, [ACAMERA_SENSOR_AVAILABLE_TEST_PATTERN_MODES](/docs/21-Aug-2024/unity-api/api/MagicLeap/Android/NDK/Camera/Metadata#enums-acamera-sensor-available-test-pattern-modes) =

acamera_metadata_section_start.ACAMERA_SENSOR_START + 25, [ACAMERA_SENSOR_ROLLING_SHUTTER_SKEW](/docs/21-Aug-2024/unity-api/api/MagicLeap/Android/NDK/Camera/Metadata#enums-acamera-sensor-rolling-shutter-skew) =

acamera_metadata_section_start.ACAMERA_SENSOR_START + 26, [ACAMERA_SENSOR_OPTICAL_BLACK_REGIONS](/docs/21-Aug-2024/unity-api/api/MagicLeap/Android/NDK/Camera/Metadata#enums-acamera-sensor-optical-black-regions) =

acamera_metadata_section_start.ACAMERA_SENSOR_START + 27, [ACAMERA_SENSOR_DYNAMIC_BLACK_LEVEL](/docs/21-Aug-2024/unity-api/api/MagicLeap/Android/NDK/Camera/Metadata#enums-acamera-sensor-dynamic-black-level) =

acamera_metadata_section_start.ACAMERA_SENSOR_START + 28, [ACAMERA_SENSOR_DYNAMIC_WHITE_LEVEL](/docs/21-Aug-2024/unity-api/api/MagicLeap/Android/NDK/Camera/Metadata#enums-acamera-sensor-dynamic-white-level) =

acamera_metadata_section_start.ACAMERA_SENSOR_START + 29, [ACAMERA_SENSOR_PIXEL_MODE](/docs/21-Aug-2024/unity-api/api/MagicLeap/Android/NDK/Camera/Metadata#enums-acamera-sensor-pixel-mode) =

acamera_metadata_section_start.ACAMERA_SENSOR_START + 32, [ACAMERA_SENSOR_RAW_BINNING_FACTOR_USED](/docs/21-Aug-2024/unity-api/api/MagicLeap/Android/NDK/Camera/Metadata#enums-acamera-sensor-raw-binning-factor-used) =

acamera_metadata_section_start.ACAMERA_SENSOR_START + 33, [ACAMERA_SENSOR_END](/docs/21-Aug-2024/unity-api/api/MagicLeap/Android/NDK/Camera/Metadata#enums-acamera-sensor-end), [ACAMERA_SENSOR_INFO_ACTIVE_ARRAY_SIZE](/docs/21-Aug-2024/unity-api/api/MagicLeap/Android/NDK/Camera/Metadata#enums-acamera-sensor-info-active-array-size) =

acamera_metadata_section_start.ACAMERA_SENSOR_INFO_START, [ACAMERA_SENSOR_INFO_SENSITIVITY_RANGE](/docs/21-Aug-2024/unity-api/api/MagicLeap/Android/NDK/Camera/Metadata#enums-acamera-sensor-info-sensitivity-range) =

acamera_metadata_section_start.ACAMERA_SENSOR_INFO_START + 1, [ACAMERA_SENSOR_INFO_COLOR_FILTER_ARRANGEMENT](/docs/21-Aug-2024/unity-api/api/MagicLeap/Android/NDK/Camera/Metadata#enums-acamera-sensor-info-color-filter-arrangement) =

acamera_metadata_section_start.ACAMERA_SENSOR_INFO_START + 2, [ACAMERA_SENSOR_INFO_EXPOSURE_TIME_RANGE](/docs/21-Aug-2024/unity-api/api/MagicLeap/Android/NDK/Camera/Metadata#enums-acamera-sensor-info-exposure-time-range) =

acamera_metadata_section_start.ACAMERA_SENSOR_INFO_START + 3, [ACAMERA_SENSOR_INFO_MAX_FRAME_DURATION](/docs/21-Aug-2024/unity-api/api/MagicLeap/Android/NDK/Camera/Metadata#enums-acamera-sensor-info-max-frame-duration) =

acamera_metadata_section_start.ACAMERA_SENSOR_INFO_START + 4, [ACAMERA_SENSOR_INFO_PHYSICAL_SIZE](/docs/21-Aug-2024/unity-api/api/MagicLeap/Android/NDK/Camera/Metadata#enums-acamera-sensor-info-physical-size) =

acamera_metadata_section_start.ACAMERA_SENSOR_INFO_START + 5, [ACAMERA_SENSOR_INFO_PIXEL_ARRAY_SIZE](/docs/21-Aug-2024/unity-api/api/MagicLeap/Android/NDK/Camera/Metadata#enums-acamera-sensor-info-pixel-array-size) =

acamera_metadata_section_start.ACAMERA_SENSOR_INFO_START + 6, [ACAMERA_SENSOR_INFO_WHITE_LEVEL](/docs/21-Aug-2024/unity-api/api/MagicLeap/Android/NDK/Camera/Metadata#enums-acamera-sensor-info-white-level) =

acamera_metadata_section_start.ACAMERA_SENSOR_INFO_START + 7, [ACAMERA_SENSOR_INFO_TIMESTAMP_SOURCE](/docs/21-Aug-2024/unity-api/api/MagicLeap/Android/NDK/Camera/Metadata#enums-acamera-sensor-info-timestamp-source) =

acamera_metadata_section_start.ACAMERA_SENSOR_INFO_START + 8, [ACAMERA_SENSOR_INFO_LENS_SHADING_APPLIED](/docs/21-Aug-2024/unity-api/api/MagicLeap/Android/NDK/Camera/Metadata#enums-acamera-sensor-info-lens-shading-applied) =

acamera_metadata_section_start.ACAMERA_SENSOR_INFO_START + 9, [ACAMERA_SENSOR_INFO_PRE_CORRECTION_ACTIVE_ARRAY_SIZE](/docs/21-Aug-2024/unity-api/api/MagicLeap/Android/NDK/Camera/Metadata#enums-acamera-sensor-info-pre-correction-active-array-size) =

acamera_metadata_section_start.ACAMERA_SENSOR_INFO_START + 10, [ACAMERA_SENSOR_INFO_ACTIVE_ARRAY_SIZE_MAXIMUM_RESOLUTION](/docs/21-Aug-2024/unity-api/api/MagicLeap/Android/NDK/Camera/Metadata#enums-acamera-sensor-info-active-array-size-maximum-resolution) =

acamera_metadata_section_start.ACAMERA_SENSOR_INFO_START + 11, [ACAMERA_SENSOR_INFO_PIXEL_ARRAY_SIZE_MAXIMUM_RESOLUTION](/docs/21-Aug-2024/unity-api/api/MagicLeap/Android/NDK/Camera/Metadata#enums-acamera-sensor-info-pixel-array-size-maximum-resolution) =

acamera_metadata_section_start.ACAMERA_SENSOR_INFO_START + 12, [ACAMERA_SENSOR_INFO_PRE_CORRECTION_ACTIVE_ARRAY_SIZE_MAXIMUM_RESOLUTION](/docs/21-Aug-2024/unity-api/api/MagicLeap/Android/NDK/Camera/Metadata#enums-acamera-sensor-info-pre-correction-active-array-size-maximum-resolution) =

acamera_metadata_section_start.ACAMERA_SENSOR_INFO_START + 13, [ACAMERA_SENSOR_INFO_BINNING_FACTOR](/docs/21-Aug-2024/unity-api/api/MagicLeap/Android/NDK/Camera/Metadata#enums-acamera-sensor-info-binning-factor) =

acamera_metadata_section_start.ACAMERA_SENSOR_INFO_START + 14, [ACAMERA_SENSOR_INFO_END](/docs/21-Aug-2024/unity-api/api/MagicLeap/Android/NDK/Camera/Metadata#enums-acamera-sensor-info-end), [ACAMERA_SHADING_MODE](/docs/21-Aug-2024/unity-api/api/MagicLeap/Android/NDK/Camera/Metadata#enums-acamera-shading-mode) =

acamera_metadata_section_start.ACAMERA_SHADING_START, [ACAMERA_SHADING_AVAILABLE_MODES](/docs/21-Aug-2024/unity-api/api/MagicLeap/Android/NDK/Camera/Metadata#enums-acamera-shading-available-modes) =

acamera_metadata_section_start.ACAMERA_SHADING_START + 2, [ACAMERA_SHADING_END](/docs/21-Aug-2024/unity-api/api/MagicLeap/Android/NDK/Camera/Metadata#enums-acamera-shading-end), [ACAMERA_STATISTICS_FACE_DETECT_MODE](/docs/21-Aug-2024/unity-api/api/MagicLeap/Android/NDK/Camera/Metadata#enums-acamera-statistics-face-detect-mode) =

acamera_metadata_section_start.ACAMERA_STATISTICS_START, [ACAMERA_STATISTICS_HOT_PIXEL_MAP_MODE](/docs/21-Aug-2024/unity-api/api/MagicLeap/Android/NDK/Camera/Metadata#enums-acamera-statistics-hot-pixel-map-mode) =

acamera_metadata_section_start.ACAMERA_STATISTICS_START + 3, [ACAMERA_STATISTICS_FACE_IDS](/docs/21-Aug-2024/unity-api/api/MagicLeap/Android/NDK/Camera/Metadata#enums-acamera-statistics-face-ids) =

acamera_metadata_section_start.ACAMERA_STATISTICS_START + 4, [ACAMERA_STATISTICS_FACE_LANDMARKS](/docs/21-Aug-2024/unity-api/api/MagicLeap/Android/NDK/Camera/Metadata#enums-acamera-statistics-face-landmarks) =

acamera_metadata_section_start.ACAMERA_STATISTICS_START + 5, [ACAMERA_STATISTICS_FACE_RECTANGLES](/docs/21-Aug-2024/unity-api/api/MagicLeap/Android/NDK/Camera/Metadata#enums-acamera-statistics-face-rectangles) =

acamera_metadata_section_start.ACAMERA_STATISTICS_START + 6, [ACAMERA_STATISTICS_FACE_SCORES](/docs/21-Aug-2024/unity-api/api/MagicLeap/Android/NDK/Camera/Metadata#enums-acamera-statistics-face-scores) =

acamera_metadata_section_start.ACAMERA_STATISTICS_START + 7, [ACAMERA_STATISTICS_LENS_SHADING_MAP](/docs/21-Aug-2024/unity-api/api/MagicLeap/Android/NDK/Camera/Metadata#enums-acamera-statistics-lens-shading-map) =

acamera_metadata_section_start.ACAMERA_STATISTICS_START + 11, [ACAMERA_STATISTICS_SCENE_FLICKER](/docs/21-Aug-2024/unity-api/api/MagicLeap/Android/NDK/Camera/Metadata#enums-acamera-statistics-scene-flicker) =

acamera_metadata_section_start.ACAMERA_STATISTICS_START + 14, [ACAMERA_STATISTICS_HOT_PIXEL_MAP](/docs/21-Aug-2024/unity-api/api/MagicLeap/Android/NDK/Camera/Metadata#enums-acamera-statistics-hot-pixel-map) =

acamera_metadata_section_start.ACAMERA_STATISTICS_START + 15, [ACAMERA_STATISTICS_LENS_SHADING_MAP_MODE](/docs/21-Aug-2024/unity-api/api/MagicLeap/Android/NDK/Camera/Metadata#enums-acamera-statistics-lens-shading-map-mode) =

acamera_metadata_section_start.ACAMERA_STATISTICS_START + 16, [ACAMERA_STATISTICS_OIS_DATA_MODE](/docs/21-Aug-2024/unity-api/api/MagicLeap/Android/NDK/Camera/Metadata#enums-acamera-statistics-ois-data-mode) =

acamera_metadata_section_start.ACAMERA_STATISTICS_START + 17, [ACAMERA_STATISTICS_OIS_TIMESTAMPS](/docs/21-Aug-2024/unity-api/api/MagicLeap/Android/NDK/Camera/Metadata#enums-acamera-statistics-ois-timestamps) =

acamera_metadata_section_start.ACAMERA_STATISTICS_START + 18, [ACAMERA_STATISTICS_OIS_X_SHIFTS](/docs/21-Aug-2024/unity-api/api/MagicLeap/Android/NDK/Camera/Metadata#enums-acamera-statistics-ois-x-shifts) =

acamera_metadata_section_start.ACAMERA_STATISTICS_START + 19, [ACAMERA_STATISTICS_OIS_Y_SHIFTS](/docs/21-Aug-2024/unity-api/api/MagicLeap/Android/NDK/Camera/Metadata#enums-acamera-statistics-ois-y-shifts) =

acamera_metadata_section_start.ACAMERA_STATISTICS_START + 20, [ACAMERA_STATISTICS_END](/docs/21-Aug-2024/unity-api/api/MagicLeap/Android/NDK/Camera/Metadata#enums-acamera-statistics-end), [ACAMERA_STATISTICS_INFO_AVAILABLE_FACE_DETECT_MODES](/docs/21-Aug-2024/unity-api/api/MagicLeap/Android/NDK/Camera/Metadata#enums-acamera-statistics-info-available-face-detect-modes) =

acamera_metadata_section_start.ACAMERA_STATISTICS_INFO_START, [ACAMERA_STATISTICS_INFO_MAX_FACE_COUNT](/docs/21-Aug-2024/unity-api/api/MagicLeap/Android/NDK/Camera/Metadata#enums-acamera-statistics-info-max-face-count) =

acamera_metadata_section_start.ACAMERA_STATISTICS_INFO_START + 2, [ACAMERA_STATISTICS_INFO_AVAILABLE_HOT_PIXEL_MAP_MODES](/docs/21-Aug-2024/unity-api/api/MagicLeap/Android/NDK/Camera/Metadata#enums-acamera-statistics-info-available-hot-pixel-map-modes) =

acamera_metadata_section_start.ACAMERA_STATISTICS_INFO_START + 6, [ACAMERA_STATISTICS_INFO_AVAILABLE_LENS_SHADING_MAP_MODES](/docs/21-Aug-2024/unity-api/api/MagicLeap/Android/NDK/Camera/Metadata#enums-acamera-statistics-info-available-lens-shading-map-modes) =

acamera_metadata_section_start.ACAMERA_STATISTICS_INFO_START + 7, [ACAMERA_STATISTICS_INFO_AVAILABLE_OIS_DATA_MODES](/docs/21-Aug-2024/unity-api/api/MagicLeap/Android/NDK/Camera/Metadata#enums-acamera-statistics-info-available-ois-data-modes) =

acamera_metadata_section_start.ACAMERA_STATISTICS_INFO_START + 8, [ACAMERA_STATISTICS_INFO_END](/docs/21-Aug-2024/unity-api/api/MagicLeap/Android/NDK/Camera/Metadata#enums-acamera-statistics-info-end), [ACAMERA_TONEMAP_CURVE_BLUE](/docs/21-Aug-2024/unity-api/api/MagicLeap/Android/NDK/Camera/Metadata#enums-acamera-tonemap-curve-blue) =

acamera_metadata_section_start.ACAMERA_TONEMAP_START, [ACAMERA_TONEMAP_CURVE_GREEN](/docs/21-Aug-2024/unity-api/api/MagicLeap/Android/NDK/Camera/Metadata#enums-acamera-tonemap-curve-green) =

acamera_metadata_section_start.ACAMERA_TONEMAP_START + 1, [ACAMERA_TONEMAP_CURVE_RED](/docs/21-Aug-2024/unity-api/api/MagicLeap/Android/NDK/Camera/Metadata#enums-acamera-tonemap-curve-red) =

acamera_metadata_section_start.ACAMERA_TONEMAP_START + 2, [ACAMERA_TONEMAP_MODE](/docs/21-Aug-2024/unity-api/api/MagicLeap/Android/NDK/Camera/Metadata#enums-acamera-tonemap-mode) =

acamera_metadata_section_start.ACAMERA_TONEMAP_START + 3, [ACAMERA_TONEMAP_MAX_CURVE_POINTS](/docs/21-Aug-2024/unity-api/api/MagicLeap/Android/NDK/Camera/Metadata#enums-acamera-tonemap-max-curve-points) =

acamera_metadata_section_start.ACAMERA_TONEMAP_START + 4, [ACAMERA_TONEMAP_AVAILABLE_TONE_MAP_MODES](/docs/21-Aug-2024/unity-api/api/MagicLeap/Android/NDK/Camera/Metadata#enums-acamera-tonemap-available-tone-map-modes) =

acamera_metadata_section_start.ACAMERA_TONEMAP_START + 5, [ACAMERA_TONEMAP_GAMMA](/docs/21-Aug-2024/unity-api/api/MagicLeap/Android/NDK/Camera/Metadata#enums-acamera-tonemap-gamma) =

acamera_metadata_section_start.ACAMERA_TONEMAP_START + 6, [ACAMERA_TONEMAP_PRESET_CURVE](/docs/21-Aug-2024/unity-api/api/MagicLeap/Android/NDK/Camera/Metadata#enums-acamera-tonemap-preset-curve) =

acamera_metadata_section_start.ACAMERA_TONEMAP_START + 7, [ACAMERA_TONEMAP_END](/docs/21-Aug-2024/unity-api/api/MagicLeap/Android/NDK/Camera/Metadata#enums-acamera-tonemap-end), [ACAMERA_INFO_SUPPORTED_HARDWARE_LEVEL](/docs/21-Aug-2024/unity-api/api/MagicLeap/Android/NDK/Camera/Metadata#enums-acamera-info-supported-hardware-level) =

acamera_metadata_section_start.ACAMERA_INFO_START, [ACAMERA_INFO_VERSION](/docs/21-Aug-2024/unity-api/api/MagicLeap/Android/NDK/Camera/Metadata#enums-acamera-info-version) =

acamera_metadata_section_start.ACAMERA_INFO_START + 1, [ACAMERA_INFO_DEVICE_STATE_ORIENTATIONS](/docs/21-Aug-2024/unity-api/api/MagicLeap/Android/NDK/Camera/Metadata#enums-acamera-info-device-state-orientations) =

acamera_metadata_section_start.ACAMERA_INFO_START + 3, [ACAMERA_INFO_END](/docs/21-Aug-2024/unity-api/api/MagicLeap/Android/NDK/Camera/Metadata#enums-acamera-info-end), [ACAMERA_BLACK_LEVEL_LOCK](/docs/21-Aug-2024/unity-api/api/MagicLeap/Android/NDK/Camera/Metadata#enums-acamera-black-level-lock) =

acamera_metadata_section_start.ACAMERA_BLACK_LEVEL_START, [ACAMERA_BLACK_LEVEL_END](/docs/21-Aug-2024/unity-api/api/MagicLeap/Android/NDK/Camera/Metadata#enums-acamera-black-level-end), [ACAMERA_SYNC_FRAME_NUMBER](/docs/21-Aug-2024/unity-api/api/MagicLeap/Android/NDK/Camera/Metadata#enums-acamera-sync-frame-number) =

acamera_metadata_section_start.ACAMERA_SYNC_START, [ACAMERA_SYNC_MAX_LATENCY](/docs/21-Aug-2024/unity-api/api/MagicLeap/Android/NDK/Camera/Metadata#enums-acamera-sync-max-latency) =

acamera_metadata_section_start.ACAMERA_SYNC_START + 1, [ACAMERA_SYNC_END](/docs/21-Aug-2024/unity-api/api/MagicLeap/Android/NDK/Camera/Metadata#enums-acamera-sync-end), [ACAMERA_DEPTH_AVAILABLE_DEPTH_STREAM_CONFIGURATIONS](/docs/21-Aug-2024/unity-api/api/MagicLeap/Android/NDK/Camera/Metadata#enums-acamera-depth-available-depth-stream-configurations) =

acamera_metadata_section_start.ACAMERA_DEPTH_START + 1, [ACAMERA_DEPTH_AVAILABLE_DEPTH_MIN_FRAME_DURATIONS](/docs/21-Aug-2024/unity-api/api/MagicLeap/Android/NDK/Camera/Metadata#enums-acamera-depth-available-depth-min-frame-durations) =

acamera_metadata_section_start.ACAMERA_DEPTH_START + 2, [ACAMERA_DEPTH_AVAILABLE_DEPTH_STALL_DURATIONS](/docs/21-Aug-2024/unity-api/api/MagicLeap/Android/NDK/Camera/Metadata#enums-acamera-depth-available-depth-stall-durations) =

acamera_metadata_section_start.ACAMERA_DEPTH_START + 3, [ACAMERA_DEPTH_DEPTH_IS_EXCLUSIVE](/docs/21-Aug-2024/unity-api/api/MagicLeap/Android/NDK/Camera/Metadata#enums-acamera-depth-depth-is-exclusive) =

acamera_metadata_section_start.ACAMERA_DEPTH_START + 4, [ACAMERA_DEPTH_AVAILABLE_RECOMMENDED_DEPTH_STREAM_CONFIGURATIONS](/docs/21-Aug-2024/unity-api/api/MagicLeap/Android/NDK/Camera/Metadata#enums-acamera-depth-available-recommended-depth-stream-configurations) =

acamera_metadata_section_start.ACAMERA_DEPTH_START + 5, [ACAMERA_DEPTH_AVAILABLE_DYNAMIC_DEPTH_STREAM_CONFIGURATIONS](/docs/21-Aug-2024/unity-api/api/MagicLeap/Android/NDK/Camera/Metadata#enums-acamera-depth-available-dynamic-depth-stream-configurations) =

acamera_metadata_section_start.ACAMERA_DEPTH_START + 6, [ACAMERA_DEPTH_AVAILABLE_DYNAMIC_DEPTH_MIN_FRAME_DURATIONS](/docs/21-Aug-2024/unity-api/api/MagicLeap/Android/NDK/Camera/Metadata#enums-acamera-depth-available-dynamic-depth-min-frame-durations) =

acamera_metadata_section_start.ACAMERA_DEPTH_START + 7, [ACAMERA_DEPTH_AVAILABLE_DYNAMIC_DEPTH_STALL_DURATIONS](/docs/21-Aug-2024/unity-api/api/MagicLeap/Android/NDK/Camera/Metadata#enums-acamera-depth-available-dynamic-depth-stall-durations) =

acamera_metadata_section_start.ACAMERA_DEPTH_START + 8, [ACAMERA_DEPTH_AVAILABLE_DEPTH_STREAM_CONFIGURATIONS_MAXIMUM_RESOLUTION](/docs/21-Aug-2024/unity-api/api/MagicLeap/Android/NDK/Camera/Metadata#enums-acamera-depth-available-depth-stream-configurations-maximum-resolution) =

acamera_metadata_section_start.ACAMERA_DEPTH_START + 9, [ACAMERA_DEPTH_AVAILABLE_DEPTH_MIN_FRAME_DURATIONS_MAXIMUM_RESOLUTION](/docs/21-Aug-2024/unity-api/api/MagicLeap/Android/NDK/Camera/Metadata#enums-acamera-depth-available-depth-min-frame-durations-maximum-resolution) =

acamera_metadata_section_start.ACAMERA_DEPTH_START + 10, [ACAMERA_DEPTH_AVAILABLE_DEPTH_STALL_DURATIONS_MAXIMUM_RESOLUTION](/docs/21-Aug-2024/unity-api/api/MagicLeap/Android/NDK/Camera/Metadata#enums-acamera-depth-available-depth-stall-durations-maximum-resolution) =

acamera_metadata_section_start.ACAMERA_DEPTH_START + 11, [ACAMERA_DEPTH_AVAILABLE_DYNAMIC_DEPTH_STREAM_CONFIGURATIONS_MAXIMUM_RESOLUTION](/docs/21-Aug-2024/unity-api/api/MagicLeap/Android/NDK/Camera/Metadata#enums-acamera-depth-available-dynamic-depth-stream-configurations-maximum-resolution) =

acamera_metadata_section_start.ACAMERA_DEPTH_START + 12, [ACAMERA_DEPTH_AVAILABLE_DYNAMIC_DEPTH_MIN_FRAME_DURATIONS_MAXIMUM_RESOLUTION](/docs/21-Aug-2024/unity-api/api/MagicLeap/Android/NDK/Camera/Metadata#enums-acamera-depth-available-dynamic-depth-min-frame-durations-maximum-resolution) =

acamera_metadata_section_start.ACAMERA_DEPTH_START + 13, [ACAMERA_DEPTH_AVAILABLE_DYNAMIC_DEPTH_STALL_DURATIONS_MAXIMUM_RESOLUTION](/docs/21-Aug-2024/unity-api/api/MagicLeap/Android/NDK/Camera/Metadata#enums-acamera-depth-available-dynamic-depth-stall-durations-maximum-resolution) =

acamera_metadata_section_start.ACAMERA_DEPTH_START + 14, [ACAMERA_DEPTH_END](/docs/21-Aug-2024/unity-api/api/MagicLeap/Android/NDK/Camera/Metadata#enums-acamera-depth-end), [ACAMERA_LOGICAL_MULTI_CAMERA_PHYSICAL_IDS](/docs/21-Aug-2024/unity-api/api/MagicLeap/Android/NDK/Camera/Metadata#enums-acamera-logical-multi-camera-physical-ids) =

acamera_metadata_section_start.ACAMERA_LOGICAL_MULTI_CAMERA_START, [ACAMERA_LOGICAL_MULTI_CAMERA_SENSOR_SYNC_TYPE](/docs/21-Aug-2024/unity-api/api/MagicLeap/Android/NDK/Camera/Metadata#enums-acamera-logical-multi-camera-sensor-sync-type) =

acamera_metadata_section_start.ACAMERA_LOGICAL_MULTI_CAMERA_START + 1, [ACAMERA_LOGICAL_MULTI_CAMERA_ACTIVE_PHYSICAL_ID](/docs/21-Aug-2024/unity-api/api/MagicLeap/Android/NDK/Camera/Metadata#enums-acamera-logical-multi-camera-active-physical-id) =

acamera_metadata_section_start.ACAMERA_LOGICAL_MULTI_CAMERA_START + 2, [ACAMERA_LOGICAL_MULTI_CAMERA_END](/docs/21-Aug-2024/unity-api/api/MagicLeap/Android/NDK/Camera/Metadata#enums-acamera-logical-multi-camera-end), [ACAMERA_DISTORTION_CORRECTION_MODE](/docs/21-Aug-2024/unity-api/api/MagicLeap/Android/NDK/Camera/Metadata#enums-acamera-distortion-correction-mode) =

acamera_metadata_section_start.ACAMERA_DISTORTION_CORRECTION_START, [ACAMERA_DISTORTION_CORRECTION_AVAILABLE_MODES](/docs/21-Aug-2024/unity-api/api/MagicLeap/Android/NDK/Camera/Metadata#enums-acamera-distortion-correction-available-modes) =

acamera_metadata_section_start.ACAMERA_DISTORTION_CORRECTION_START + 1, [ACAMERA_DISTORTION_CORRECTION_END](/docs/21-Aug-2024/unity-api/api/MagicLeap/Android/NDK/Camera/Metadata#enums-acamera-distortion-correction-end), [ACAMERA_HEIC_AVAILABLE_HEIC_STREAM_CONFIGURATIONS](/docs/21-Aug-2024/unity-api/api/MagicLeap/Android/NDK/Camera/Metadata#enums-acamera-heic-available-heic-stream-configurations) =

acamera_metadata_section_start.ACAMERA_HEIC_START, [ACAMERA_HEIC_AVAILABLE_HEIC_MIN_FRAME_DURATIONS](/docs/21-Aug-2024/unity-api/api/MagicLeap/Android/NDK/Camera/Metadata#enums-acamera-heic-available-heic-min-frame-durations) =

acamera_metadata_section_start.ACAMERA_HEIC_START + 1, [ACAMERA_HEIC_AVAILABLE_HEIC_STALL_DURATIONS](/docs/21-Aug-2024/unity-api/api/MagicLeap/Android/NDK/Camera/Metadata#enums-acamera-heic-available-heic-stall-durations) =

acamera_metadata_section_start.ACAMERA_HEIC_START + 2, [ACAMERA_HEIC_AVAILABLE_HEIC_STREAM_CONFIGURATIONS_MAXIMUM_RESOLUTION](/docs/21-Aug-2024/unity-api/api/MagicLeap/Android/NDK/Camera/Metadata#enums-acamera-heic-available-heic-stream-configurations-maximum-resolution) =

acamera_metadata_section_start.ACAMERA_HEIC_START + 3, [ACAMERA_HEIC_AVAILABLE_HEIC_MIN_FRAME_DURATIONS_MAXIMUM_RESOLUTION](/docs/21-Aug-2024/unity-api/api/MagicLeap/Android/NDK/Camera/Metadata#enums-acamera-heic-available-heic-min-frame-durations-maximum-resolution) =

acamera_metadata_section_start.ACAMERA_HEIC_START + 4, [ACAMERA_HEIC_AVAILABLE_HEIC_STALL_DURATIONS_MAXIMUM_RESOLUTION](/docs/21-Aug-2024/unity-api/api/MagicLeap/Android/NDK/Camera/Metadata#enums-acamera-heic-available-heic-stall-durations-maximum-resolution) =

acamera_metadata_section_start.ACAMERA_HEIC_START + 5, [ACAMERA_HEIC_END](/docs/21-Aug-2024/unity-api/api/MagicLeap/Android/NDK/Camera/Metadata#enums-acamera-heic-end), [ACAMERA_AUTOMOTIVE_LOCATION](/docs/21-Aug-2024/unity-api/api/MagicLeap/Android/NDK/Camera/Metadata#enums-acamera-automotive-location) =

acamera_metadata_section_start.ACAMERA_AUTOMOTIVE_START, [ACAMERA_AUTOMOTIVE_END](/docs/21-Aug-2024/unity-api/api/MagicLeap/Android/NDK/Camera/Metadata#enums-acamera-automotive-end), [ACAMERA_AUTOMOTIVE_LENS_FACING](/docs/21-Aug-2024/unity-api/api/MagicLeap/Android/NDK/Camera/Metadata#enums-acamera-automotive-lens-facing) =

acamera_metadata_section_start.ACAMERA_AUTOMOTIVE_LENS_START, [ACAMERA_AUTOMOTIVE_LENS_END](/docs/21-Aug-2024/unity-api/api/MagicLeap/Android/NDK/Camera/Metadata#enums-acamera-automotive-lens-end) }** |

| enum | acamera_metadata_enum_acamera_automotive_lens_facing { ACAMERA_AUTOMOTIVE_LENS_FACING_EXTERIOR_OTHER = 0, ACAMERA_AUTOMOTIVE_LENS_FACING_EXTERIOR_FRONT = 1, ACAMERA_AUTOMOTIVE_LENS_FACING_EXTERIOR_REAR = 2, ACAMERA_AUTOMOTIVE_LENS_FACING_EXTERIOR_LEFT = 3, ACAMERA_AUTOMOTIVE_LENS_FACING_EXTERIOR_RIGHT = 4, ACAMERA_AUTOMOTIVE_LENS_FACING_INTERIOR_OTHER = 5, ACAMERA_AUTOMOTIVE_LENS_FACING_INTERIOR_SEAT_ROW_1_LEFT = 6, ACAMERA_AUTOMOTIVE_LENS_FACING_INTERIOR_SEAT_ROW_1_CENTER = 7, ACAMERA_AUTOMOTIVE_LENS_FACING_INTERIOR_SEAT_ROW_1_RIGHT = 8, ACAMERA_AUTOMOTIVE_LENS_FACING_INTERIOR_SEAT_ROW_2_LEFT = 9, ACAMERA_AUTOMOTIVE_LENS_FACING_INTERIOR_SEAT_ROW_2_CENTER = 10, ACAMERA_AUTOMOTIVE_LENS_FACING_INTERIOR_SEAT_ROW_2_RIGHT = 11, ACAMERA_AUTOMOTIVE_LENS_FACING_INTERIOR_SEAT_ROW_3_LEFT = 12, ACAMERA_AUTOMOTIVE_LENS_FACING_INTERIOR_SEAT_ROW_3_CENTER = 13, ACAMERA_AUTOMOTIVE_LENS_FACING_INTERIOR_SEAT_ROW_3_RIGHT = 14 } |

| enum | acamera_metadata_enum_acamera_automotive_location { ACAMERA_AUTOMOTIVE_LOCATION_INTERIOR = 0, ACAMERA_AUTOMOTIVE_LOCATION_EXTERIOR_OTHER = 1, ACAMERA_AUTOMOTIVE_LOCATION_EXTERIOR_FRONT = 2, ACAMERA_AUTOMOTIVE_LOCATION_EXTERIOR_REAR = 3, ACAMERA_AUTOMOTIVE_LOCATION_EXTERIOR_LEFT = 4, ACAMERA_AUTOMOTIVE_LOCATION_EXTERIOR_RIGHT = 5, ACAMERA_AUTOMOTIVE_LOCATION_EXTRA_OTHER = 6, ACAMERA_AUTOMOTIVE_LOCATION_EXTRA_FRONT = 7, ACAMERA_AUTOMOTIVE_LOCATION_EXTRA_REAR = 8, ACAMERA_AUTOMOTIVE_LOCATION_EXTRA_LEFT = 9, ACAMERA_AUTOMOTIVE_LOCATION_EXTRA_RIGHT = 10 } |

| enum | acamera_metadata_enum_acamera_black_level_lock { ACAMERA_BLACK_LEVEL_LOCK_OFF = 0, ACAMERA_BLACK_LEVEL_LOCK_ON = 1 } |

| enum | acamera_metadata_enum_acamera_color_correction_aberration_mode { ACAMERA_COLOR_CORRECTION_ABERRATION_MODE_OFF = 0, ACAMERA_COLOR_CORRECTION_ABERRATION_MODE_FAST = 1, ACAMERA_COLOR_CORRECTION_ABERRATION_MODE_HIGH_QUALITY = 2 } |

| enum | acamera_metadata_enum_acamera_color_correction_mode { ACAMERA_COLOR_CORRECTION_MODE_TRANSFORM_MATRIX = 0, ACAMERA_COLOR_CORRECTION_MODE_FAST = 1, ACAMERA_COLOR_CORRECTION_MODE_HIGH_QUALITY = 2 } |

| enum | acamera_metadata_enum_acamera_control_ae_antibanding_mode { ACAMERA_CONTROL_AE_ANTIBANDING_MODE_OFF = 0, ACAMERA_CONTROL_AE_ANTIBANDING_MODE_50HZ = 1, ACAMERA_CONTROL_AE_ANTIBANDING_MODE_60HZ = 2, ACAMERA_CONTROL_AE_ANTIBANDING_MODE_AUTO = 3 } |

| enum | acamera_metadata_enum_acamera_control_ae_lock { ACAMERA_CONTROL_AE_LOCK_OFF = 0, ACAMERA_CONTROL_AE_LOCK_ON = 1 } |

| enum | acamera_metadata_enum_acamera_control_ae_lock_available { ACAMERA_CONTROL_AE_LOCK_AVAILABLE_FALSE = 0, ACAMERA_CONTROL_AE_LOCK_AVAILABLE_TRUE = 1 } |

| enum | acamera_metadata_enum_acamera_control_ae_mode { ACAMERA_CONTROL_AE_MODE_OFF = 0, ACAMERA_CONTROL_AE_MODE_ON = 1, ACAMERA_CONTROL_AE_MODE_ON_AUTO_FLASH = 2, ACAMERA_CONTROL_AE_MODE_ON_ALWAYS_FLASH = 3, ACAMERA_CONTROL_AE_MODE_ON_AUTO_FLASH_REDEYE = 4, ACAMERA_CONTROL_AE_MODE_ON_EXTERNAL_FLASH = 5 } |

| enum | acamera_metadata_enum_acamera_control_ae_precapture_trigger { ACAMERA_CONTROL_AE_PRECAPTURE_TRIGGER_IDLE = 0, ACAMERA_CONTROL_AE_PRECAPTURE_TRIGGER_START = 1, ACAMERA_CONTROL_AE_PRECAPTURE_TRIGGER_CANCEL = 2 } |

| enum | acamera_metadata_enum_acamera_control_ae_state { ACAMERA_CONTROL_AE_STATE_INACTIVE = 0, ACAMERA_CONTROL_AE_STATE_SEARCHING = 1, ACAMERA_CONTROL_AE_STATE_CONVERGED = 2, ACAMERA_CONTROL_AE_STATE_LOCKED = 3, ACAMERA_CONTROL_AE_STATE_FLASH_REQUIRED = 4, ACAMERA_CONTROL_AE_STATE_PRECAPTURE = 5 } |

| enum | acamera_metadata_enum_acamera_control_af_mode { ACAMERA_CONTROL_AF_MODE_OFF = 0, ACAMERA_CONTROL_AF_MODE_AUTO = 1, ACAMERA_CONTROL_AF_MODE_MACRO = 2, ACAMERA_CONTROL_AF_MODE_CONTINUOUS_VIDEO = 3, ACAMERA_CONTROL_AF_MODE_CONTINUOUS_PICTURE = 4, ACAMERA_CONTROL_AF_MODE_EDOF = 5 } |

| enum | acamera_metadata_enum_acamera_control_af_scene_change { ACAMERA_CONTROL_AF_SCENE_CHANGE_NOT_DETECTED = 0, ACAMERA_CONTROL_AF_SCENE_CHANGE_DETECTED = 1 } |

| enum | acamera_metadata_enum_acamera_control_af_state { ACAMERA_CONTROL_AF_STATE_INACTIVE = 0, ACAMERA_CONTROL_AF_STATE_PASSIVE_SCAN = 1, ACAMERA_CONTROL_AF_STATE_PASSIVE_FOCUSED = 2, ACAMERA_CONTROL_AF_STATE_ACTIVE_SCAN = 3, ACAMERA_CONTROL_AF_STATE_FOCUSED_LOCKED = 4, ACAMERA_CONTROL_AF_STATE_NOT_FOCUSED_LOCKED = 5, ACAMERA_CONTROL_AF_STATE_PASSIVE_UNFOCUSED = 6 } |

| enum | acamera_metadata_enum_acamera_control_af_trigger { ACAMERA_CONTROL_AF_TRIGGER_IDLE = 0, ACAMERA_CONTROL_AF_TRIGGER_START = 1, ACAMERA_CONTROL_AF_TRIGGER_CANCEL = 2 } |

| enum | acamera_metadata_enum_acamera_control_awb_lock { ACAMERA_CONTROL_AWB_LOCK_OFF = 0, ACAMERA_CONTROL_AWB_LOCK_ON = 1 } |

| enum | acamera_metadata_enum_acamera_control_awb_lock_available { ACAMERA_CONTROL_AWB_LOCK_AVAILABLE_FALSE = 0, ACAMERA_CONTROL_AWB_LOCK_AVAILABLE_TRUE = 1 } |

| enum | acamera_metadata_enum_acamera_control_awb_mode { ACAMERA_CONTROL_AWB_MODE_OFF = 0, ACAMERA_CONTROL_AWB_MODE_AUTO = 1, ACAMERA_CONTROL_AWB_MODE_INCANDESCENT = 2, ACAMERA_CONTROL_AWB_MODE_FLUORESCENT = 3, ACAMERA_CONTROL_AWB_MODE_WARM_FLUORESCENT = 4, ACAMERA_CONTROL_AWB_MODE_DAYLIGHT = 5, ACAMERA_CONTROL_AWB_MODE_CLOUDY_DAYLIGHT = 6, ACAMERA_CONTROL_AWB_MODE_TWILIGHT = 7, ACAMERA_CONTROL_AWB_MODE_SHADE = 8 } |

| enum | acamera_metadata_enum_acamera_control_awb_state { ACAMERA_CONTROL_AWB_STATE_INACTIVE = 0, ACAMERA_CONTROL_AWB_STATE_SEARCHING = 1, ACAMERA_CONTROL_AWB_STATE_CONVERGED = 2, ACAMERA_CONTROL_AWB_STATE_LOCKED = 3 } |

| enum | acamera_metadata_enum_acamera_control_capture_intent { ACAMERA_CONTROL_CAPTURE_INTENT_CUSTOM = 0, ACAMERA_CONTROL_CAPTURE_INTENT_PREVIEW = 1, ACAMERA_CONTROL_CAPTURE_INTENT_STILL_CAPTURE = 2, ACAMERA_CONTROL_CAPTURE_INTENT_VIDEO_RECORD = 3, ACAMERA_CONTROL_CAPTURE_INTENT_VIDEO_SNAPSHOT = 4, ACAMERA_CONTROL_CAPTURE_INTENT_ZERO_SHUTTER_LAG = 5, ACAMERA_CONTROL_CAPTURE_INTENT_MANUAL = 6, ACAMERA_CONTROL_CAPTURE_INTENT_MOTION_TRACKING = 7 } |

| enum | acamera_metadata_enum_acamera_control_effect_mode { ACAMERA_CONTROL_EFFECT_MODE_OFF = 0, ACAMERA_CONTROL_EFFECT_MODE_MONO = 1, ACAMERA_CONTROL_EFFECT_MODE_NEGATIVE = 2, ACAMERA_CONTROL_EFFECT_MODE_SOLARIZE = 3, ACAMERA_CONTROL_EFFECT_MODE_SEPIA = 4, ACAMERA_CONTROL_EFFECT_MODE_POSTERIZE = 5, ACAMERA_CONTROL_EFFECT_MODE_WHITEBOARD = 6, ACAMERA_CONTROL_EFFECT_MODE_BLACKBOARD = 7, ACAMERA_CONTROL_EFFECT_MODE_AQUA = 8 } |

| enum | acamera_metadata_enum_acamera_control_enable_zsl { ACAMERA_CONTROL_ENABLE_ZSL_FALSE = 0, ACAMERA_CONTROL_ENABLE_ZSL_TRUE = 1 } |

| enum | acamera_metadata_enum_acamera_control_extended_scene_mode { ACAMERA_CONTROL_EXTENDED_SCENE_MODE_DISABLED = 0, ACAMERA_CONTROL_EXTENDED_SCENE_MODE_BOKEH_STILL_CAPTURE = 1, ACAMERA_CONTROL_EXTENDED_SCENE_MODE_BOKEH_CONTINUOUS = 2 } |

| enum | acamera_metadata_enum_acamera_control_mode { ACAMERA_CONTROL_MODE_OFF = 0, ACAMERA_CONTROL_MODE_AUTO = 1, ACAMERA_CONTROL_MODE_USE_SCENE_MODE = 2, ACAMERA_CONTROL_MODE_OFF_KEEP_STATE = 3, ACAMERA_CONTROL_MODE_USE_EXTENDED_SCENE_MODE = 4 } |

| enum | acamera_metadata_enum_acamera_control_scene_mode { ACAMERA_CONTROL_SCENE_MODE_DISABLED = 0, ACAMERA_CONTROL_SCENE_MODE_FACE_PRIORITY = 1, ACAMERA_CONTROL_SCENE_MODE_ACTION = 2, ACAMERA_CONTROL_SCENE_MODE_PORTRAIT = 3, ACAMERA_CONTROL_SCENE_MODE_LANDSCAPE = 4, ACAMERA_CONTROL_SCENE_MODE_NIGHT = 5, ACAMERA_CONTROL_SCENE_MODE_NIGHT_PORTRAIT = 6, ACAMERA_CONTROL_SCENE_MODE_THEATRE = 7, ACAMERA_CONTROL_SCENE_MODE_BEACH = 8, ACAMERA_CONTROL_SCENE_MODE_SNOW = 9, ACAMERA_CONTROL_SCENE_MODE_SUNSET = 10, ACAMERA_CONTROL_SCENE_MODE_STEADYPHOTO = 11, ACAMERA_CONTROL_SCENE_MODE_FIREWORKS = 12, ACAMERA_CONTROL_SCENE_MODE_SPORTS = 13, ACAMERA_CONTROL_SCENE_MODE_PARTY = 14, ACAMERA_CONTROL_SCENE_MODE_CANDLELIGHT = 15, ACAMERA_CONTROL_SCENE_MODE_BARCODE = 16, ACAMERA_CONTROL_SCENE_MODE_HDR = 18 } |

| enum | acamera_metadata_enum_acamera_control_video_stabilization_mode { ACAMERA_CONTROL_VIDEO_STABILIZATION_MODE_OFF = 0, ACAMERA_CONTROL_VIDEO_STABILIZATION_MODE_ON = 1, ACAMERA_CONTROL_VIDEO_STABILIZATION_MODE_PREVIEW_STABILIZATION = 2 } |

| enum | acamera_metadata_enum_acamera_depth_available_depth_stream_configurations { ACAMERA_DEPTH_AVAILABLE_DEPTH_STREAM_CONFIGURATIONS_OUTPUT = 0, ACAMERA_DEPTH_AVAILABLE_DEPTH_STREAM_CONFIGURATIONS_INPUT = 1 } |

| enum | acamera_metadata_enum_acamera_depth_available_depth_stream_configurations_maximum_resolution { ACAMERA_DEPTH_AVAILABLE_DEPTH_STREAM_CONFIGURATIONS_MAXIMUM_RESOLUTION_OUTPUT = 0, ACAMERA_DEPTH_AVAILABLE_DEPTH_STREAM_CONFIGURATIONS_MAXIMUM_RESOLUTION_INPUT = 1 } |

| enum | acamera_metadata_enum_acamera_depth_available_dynamic_depth_stream_configurations { ACAMERA_DEPTH_AVAILABLE_DYNAMIC_DEPTH_STREAM_CONFIGURATIONS_OUTPUT = 0, ACAMERA_DEPTH_AVAILABLE_DYNAMIC_DEPTH_STREAM_CONFIGURATIONS_INPUT = 1 } |

| enum | acamera_metadata_enum_acamera_depth_available_dynamic_depth_stream_configurations_maximum_resolution { ACAMERA_DEPTH_AVAILABLE_DYNAMIC_DEPTH_STREAM_CONFIGURATIONS_MAXIMUM_RESOLUTION_OUTPUT = 0, ACAMERA_DEPTH_AVAILABLE_DYNAMIC_DEPTH_STREAM_CONFIGURATIONS_MAXIMUM_RESOLUTION_INPUT = 1 } |

| enum | acamera_metadata_enum_acamera_depth_depth_is_exclusive { ACAMERA_DEPTH_DEPTH_IS_EXCLUSIVE_FALSE = 0, ACAMERA_DEPTH_DEPTH_IS_EXCLUSIVE_TRUE = 1 } |

| enum | acamera_metadata_enum_acamera_distortion_correction_mode { ACAMERA_DISTORTION_CORRECTION_MODE_OFF = 0, ACAMERA_DISTORTION_CORRECTION_MODE_FAST = 1, ACAMERA_DISTORTION_CORRECTION_MODE_HIGH_QUALITY = 2 } |

| enum | acamera_metadata_enum_acamera_edge_mode { ACAMERA_EDGE_MODE_OFF = 0, ACAMERA_EDGE_MODE_FAST = 1, ACAMERA_EDGE_MODE_HIGH_QUALITY = 2, ACAMERA_EDGE_MODE_ZERO_SHUTTER_LAG = 3 } |

| enum | acamera_metadata_enum_acamera_flash_info_available { ACAMERA_FLASH_INFO_AVAILABLE_FALSE = 0, ACAMERA_FLASH_INFO_AVAILABLE_TRUE = 1 } |

| enum | acamera_metadata_enum_acamera_flash_mode { ACAMERA_FLASH_MODE_OFF = 0, ACAMERA_FLASH_MODE_SINGLE = 1, ACAMERA_FLASH_MODE_TORCH = 2 } |

| enum | acamera_metadata_enum_acamera_flash_state { ACAMERA_FLASH_STATE_UNAVAILABLE = 0, ACAMERA_FLASH_STATE_CHARGING = 1, ACAMERA_FLASH_STATE_READY = 2, ACAMERA_FLASH_STATE_FIRED = 3, ACAMERA_FLASH_STATE_PARTIAL = 4 } |

| enum | acamera_metadata_enum_acamera_heic_available_heic_stream_configurations { ACAMERA_HEIC_AVAILABLE_HEIC_STREAM_CONFIGURATIONS_OUTPUT = 0, ACAMERA_HEIC_AVAILABLE_HEIC_STREAM_CONFIGURATIONS_INPUT = 1 } |

| enum | acamera_metadata_enum_acamera_heic_available_heic_stream_configurations_maximum_resolution { ACAMERA_HEIC_AVAILABLE_HEIC_STREAM_CONFIGURATIONS_MAXIMUM_RESOLUTION_OUTPUT = 0, ACAMERA_HEIC_AVAILABLE_HEIC_STREAM_CONFIGURATIONS_MAXIMUM_RESOLUTION_INPUT = 1 } |

| enum | acamera_metadata_enum_acamera_hot_pixel_mode { ACAMERA_HOT_PIXEL_MODE_OFF = 0, ACAMERA_HOT_PIXEL_MODE_FAST = 1, ACAMERA_HOT_PIXEL_MODE_HIGH_QUALITY = 2 } |

| enum | acamera_metadata_enum_acamera_info_supported_hardware_level { ACAMERA_INFO_SUPPORTED_HARDWARE_LEVEL_LIMITED = 0, ACAMERA_INFO_SUPPORTED_HARDWARE_LEVEL_FULL = 1, ACAMERA_INFO_SUPPORTED_HARDWARE_LEVEL_LEGACY = 2, ACAMERA_INFO_SUPPORTED_HARDWARE_LEVEL_3 = 3, ACAMERA_INFO_SUPPORTED_HARDWARE_LEVEL_EXTERNAL = 4 } |

| enum | acamera_metadata_enum_acamera_lens_facing { ACAMERA_LENS_FACING_FRONT = 0, ACAMERA_LENS_FACING_BACK = 1, ACAMERA_LENS_FACING_EXTERNAL = 2 } |

| enum | acamera_metadata_enum_acamera_lens_info_focus_distance_calibration { ACAMERA_LENS_INFO_FOCUS_DISTANCE_CALIBRATION_UNCALIBRATED = 0, ACAMERA_LENS_INFO_FOCUS_DISTANCE_CALIBRATION_APPROXIMATE = 1, ACAMERA_LENS_INFO_FOCUS_DISTANCE_CALIBRATION_CALIBRATED = 2 } |

| enum | acamera_metadata_enum_acamera_lens_optical_stabilization_mode { ACAMERA_LENS_OPTICAL_STABILIZATION_MODE_OFF = 0, ACAMERA_LENS_OPTICAL_STABILIZATION_MODE_ON = 1 } |

| enum | acamera_metadata_enum_acamera_lens_pose_reference { ACAMERA_LENS_POSE_REFERENCE_PRIMARY_CAMERA = 0, ACAMERA_LENS_POSE_REFERENCE_GYROSCOPE = 1, ACAMERA_LENS_POSE_REFERENCE_UNDEFINED = 2, ACAMERA_LENS_POSE_REFERENCE_AUTOMOTIVE = 3 } |

| enum | acamera_metadata_enum_acamera_lens_state { ACAMERA_LENS_STATE_STATIONARY = 0, ACAMERA_LENS_STATE_MOVING = 1 } |

| enum | acamera_metadata_enum_acamera_logical_multi_camera_sensor_sync_type

{

ACAMERA_LOGICAL_MULTI_CAMERA_SENSOR_SYNC_TYPE_APPROXIMATE = 0, ACAMERA_LOGICAL_MULTI_CAMERA_SENSOR_SYNC_TYPE_CALIBRATED = 1

} |

| enum | acamera_metadata_enum_acamera_noise_reduction_mode

{

ACAMERA_NOISE_REDUCTION_MODE_OFF = 0, ACAMERA_NOISE_REDUCTION_MODE_FAST = 1, ACAMERA_NOISE_REDUCTION_MODE_HIGH_QUALITY = 2, ACAMERA_NOISE_REDUCTION_MODE_MINIMAL = 3, ACAMERA_NOISE_REDUCTION_MODE_ZERO_SHUTTER_LAG = 4

} |

| enum | acamera_metadata_enum_acamera_request_available_capabilities

{

ACAMERA_REQUEST_AVAILABLE_CAPABILITIES_BACKWARD_COMPATIBLE = 0, ACAMERA_REQUEST_AVAILABLE_CAPABILITIES_MANUAL_SENSOR = 1, ACAMERA_REQUEST_AVAILABLE_CAPABILITIES_MANUAL_POST_PROCESSING = 2, ACAMERA_REQUEST_AVAILABLE_CAPABILITIES_RAW = 3, ACAMERA_REQUEST_AVAILABLE_CAPABILITIES_READ_SENSOR_SETTINGS = 5, ACAMERA_REQUEST_AVAILABLE_CAPABILITIES_BURST_CAPTURE = 6, ACAMERA_REQUEST_AVAILABLE_CAPABILITIES_DEPTH_OUTPUT = 8, ACAMERA_REQUEST_AVAILABLE_CAPABILITIES_MOTION_TRACKING = 10, ACAMERA_REQUEST_AVAILABLE_CAPABILITIES_LOGICAL_MULTI_CAMERA = 11, ACAMERA_REQUEST_AVAILABLE_CAPABILITIES_MONOCHROME = 12, ACAMERA_REQUEST_AVAILABLE_CAPABILITIES_SECURE_IMAGE_DATA = 13, ACAMERA_REQUEST_AVAILABLE_CAPABILITIES_SYSTEM_CAMERA = 14, ACAMERA_REQUEST_AVAILABLE_CAPABILITIES_ULTRA_HIGH_RESOLUTION_SENSOR = 16, ACAMERA_REQUEST_AVAILABLE_CAPABILITIES_STREAM_USE_CASE = 19

} |

| enum | acamera_metadata_enum_acamera_request_available_dynamic_range_profiles_map

{

ACAMERA_REQUEST_AVAILABLE_DYNAMIC_RANGE_PROFILES_MAP_STANDARD = 0x1, ACAMERA_REQUEST_AVAILABLE_DYNAMIC_RANGE_PROFILES_MAP_HLG10 = 0x2, ACAMERA_REQUEST_AVAILABLE_DYNAMIC_RANGE_PROFILES_MAP_HDR10 = 0x4, ACAMERA_REQUEST_AVAILABLE_DYNAMIC_RANGE_PROFILES_MAP_HDR10_PLUS = 0x8, ACAMERA_REQUEST_AVAILABLE_DYNAMIC_RANGE_PROFILES_MAP_DOLBY_VISION_10B_HDR_REF = 0x10, ACAMERA_REQUEST_AVAILABLE_DYNAMIC_RANGE_PROFILES_MAP_DOLBY_VISION_10B_HDR_REF_PO = 0x20, ACAMERA_REQUEST_AVAILABLE_DYNAMIC_RANGE_PROFILES_MAP_DOLBY_VISION_10B_HDR_OEM = 0x40, ACAMERA_REQUEST_AVAILABLE_DYNAMIC_RANGE_PROFILES_MAP_DOLBY_VISION_10B_HDR_OEM_PO = 0x80, ACAMERA_REQUEST_AVAILABLE_DYNAMIC_RANGE_PROFILES_MAP_DOLBY_VISION_8B_HDR_REF = 0x100, ACAMERA_REQUEST_AVAILABLE_DYNAMIC_RANGE_PROFILES_MAP_DOLBY_VISION_8B_HDR_REF_PO = 0x200, ACAMERA_REQUEST_AVAILABLE_DYNAMIC_RANGE_PROFILES_MAP_DOLBY_VISION_8B_HDR_OEM = 0x400, ACAMERA_REQUEST_AVAILABLE_DYNAMIC_RANGE_PROFILES_MAP_DOLBY_VISION_8B_HDR_OEM_PO = 0x800, ACAMERA_REQUEST_AVAILABLE_DYNAMIC_RANGE_PROFILES_MAP_MAX = 0x1000

} |

| enum | acamera_metadata_enum_acamera_scaler_available_recommended_stream_configurations

{

ACAMERA_SCALER_AVAILABLE_RECOMMENDED_STREAM_CONFIGURATIONS_PREVIEW = 0x0, ACAMERA_SCALER_AVAILABLE_RECOMMENDED_STREAM_CONFIGURATIONS_RECORD = 0x1, ACAMERA_SCALER_AVAILABLE_RECOMMENDED_STREAM_CONFIGURATIONS_VIDEO_SNAPSHOT = 0x2, ACAMERA_SCALER_AVAILABLE_RECOMMENDED_STREAM_CONFIGURATIONS_SNAPSHOT = 0x3, ACAMERA_SCALER_AVAILABLE_RECOMMENDED_STREAM_CONFIGURATIONS_ZSL = 0x4, ACAMERA_SCALER_AVAILABLE_RECOMMENDED_STREAM_CONFIGURATIONS_RAW = 0x5, ACAMERA_SCALER_AVAILABLE_RECOMMENDED_STREAM_CONFIGURATIONS_LOW_LATENCY_SNAPSHOT = 0x6, ACAMERA_SCALER_AVAILABLE_RECOMMENDED_STREAM_CONFIGURATIONS_PUBLIC_END = 0x7, ACAMERA_SCALER_AVAILABLE_RECOMMENDED_STREAM_CONFIGURATIONS_10BIT_OUTPUT = 0x8, ACAMERA_SCALER_AVAILABLE_RECOMMENDED_STREAM_CONFIGURATIONS_PUBLIC_END_3_8 = 0x9, ACAMERA_SCALER_AVAILABLE_RECOMMENDED_STREAM_CONFIGURATIONS_VENDOR_START = 0x18

} |

| enum | acamera_metadata_enum_acamera_scaler_available_stream_configurations

{

ACAMERA_SCALER_AVAILABLE_STREAM_CONFIGURATIONS_OUTPUT = 0, ACAMERA_SCALER_AVAILABLE_STREAM_CONFIGURATIONS_INPUT = 1

} |

| enum | acamera_metadata_enum_acamera_scaler_available_stream_configurations_maximum_resolution

{

ACAMERA_SCALER_AVAILABLE_STREAM_CONFIGURATIONS_MAXIMUM_RESOLUTION_OUTPUT = 0, ACAMERA_SCALER_AVAILABLE_STREAM_CONFIGURATIONS_MAXIMUM_RESOLUTION_INPUT = 1

} |

| enum | acamera_metadata_enum_acamera_scaler_available_stream_use_cases

{

ACAMERA_SCALER_AVAILABLE_STREAM_USE_CASES_DEFAULT = 0x0, ACAMERA_SCALER_AVAILABLE_STREAM_USE_CASES_PREVIEW = 0x1, ACAMERA_SCALER_AVAILABLE_STREAM_USE_CASES_STILL_CAPTURE = 0x2, ACAMERA_SCALER_AVAILABLE_STREAM_USE_CASES_VIDEO_RECORD = 0x3, ACAMERA_SCALER_AVAILABLE_STREAM_USE_CASES_PREVIEW_VIDEO_STILL = 0x4, ACAMERA_SCALER_AVAILABLE_STREAM_USE_CASES_VIDEO_CALL = 0x5

} |

| enum | acamera_metadata_enum_acamera_scaler_cropping_type

{

ACAMERA_SCALER_CROPPING_TYPE_CENTER_ONLY = 0, ACAMERA_SCALER_CROPPING_TYPE_FREEFORM = 1

} |

| enum | acamera_metadata_enum_acamera_scaler_multi_resolution_stream_supported

{

ACAMERA_SCALER_MULTI_RESOLUTION_STREAM_SUPPORTED_FALSE = 0, ACAMERA_SCALER_MULTI_RESOLUTION_STREAM_SUPPORTED_TRUE = 1

} |

| enum | acamera_metadata_enum_acamera_scaler_physical_camera_multi_resolution_stream_configurations

{

ACAMERA_SCALER_PHYSICAL_CAMERA_MULTI_RESOLUTION_STREAM_CONFIGURATIONS_OUTPUT = 0, ACAMERA_SCALER_PHYSICAL_CAMERA_MULTI_RESOLUTION_STREAM_CONFIGURATIONS_INPUT = 1

} |

| enum | acamera_metadata_enum_acamera_scaler_rotate_and_crop

{

ACAMERA_SCALER_ROTATE_AND_CROP_NONE = 0, ACAMERA_SCALER_ROTATE_AND_CROP_90 = 1, ACAMERA_SCALER_ROTATE_AND_CROP_180 = 2, ACAMERA_SCALER_ROTATE_AND_CROP_270 = 3, ACAMERA_SCALER_ROTATE_AND_CROP_AUTO = 4

} |

| enum | acamera_metadata_enum_acamera_sensor_info_color_filter_arrangement

{

ACAMERA_SENSOR_INFO_COLOR_FILTER_ARRANGEMENT_RGGB = 0, ACAMERA_SENSOR_INFO_COLOR_FILTER_ARRANGEMENT_GRBG = 1, ACAMERA_SENSOR_INFO_COLOR_FILTER_ARRANGEMENT_GBRG = 2, ACAMERA_SENSOR_INFO_COLOR_FILTER_ARRANGEMENT_BGGR = 3, ACAMERA_SENSOR_INFO_COLOR_FILTER_ARRANGEMENT_RGB = 4, ACAMERA_SENSOR_INFO_COLOR_FILTER_ARRANGEMENT_MONO = 5, ACAMERA_SENSOR_INFO_COLOR_FILTER_ARRANGEMENT_NIR = 6

} |

| enum | acamera_metadata_enum_acamera_sensor_info_lens_shading_applied

{

ACAMERA_SENSOR_INFO_LENS_SHADING_APPLIED_FALSE = 0, ACAMERA_SENSOR_INFO_LENS_SHADING_APPLIED_TRUE = 1

} |

| enum | acamera_metadata_enum_acamera_sensor_info_timestamp_source

{

ACAMERA_SENSOR_INFO_TIMESTAMP_SOURCE_UNKNOWN = 0, ACAMERA_SENSOR_INFO_TIMESTAMP_SOURCE_REALTIME = 1

} |

| enum | acamera_metadata_enum_acamera_sensor_pixel_mode

{

ACAMERA_SENSOR_PIXEL_MODE_DEFAULT = 0, ACAMERA_SENSOR_PIXEL_MODE_MAXIMUM_RESOLUTION = 1

} |

| enum | acamera_metadata_enum_acamera_sensor_raw_binning_factor_used

{

ACAMERA_SENSOR_RAW_BINNING_FACTOR_USED_TRUE = 0, ACAMERA_SENSOR_RAW_BINNING_FACTOR_USED_FALSE = 1

} |

| enum | acamera_metadata_enum_acamera_sensor_reference_illuminant1

{

ACAMERA_SENSOR_REFERENCE_ILLUMINANT1_DAYLIGHT = 1, ACAMERA_SENSOR_REFERENCE_ILLUMINANT1_FLUORESCENT = 2, ACAMERA_SENSOR_REFERENCE_ILLUMINANT1_TUNGSTEN = 3, ACAMERA_SENSOR_REFERENCE_ILLUMINANT1_FLASH = 4, ACAMERA_SENSOR_REFERENCE_ILLUMINANT1_FINE_WEATHER = 9, ACAMERA_SENSOR_REFERENCE_ILLUMINANT1_CLOUDY_WEATHER = 10, ACAMERA_SENSOR_REFERENCE_ILLUMINANT1_SHADE = 11, ACAMERA_SENSOR_REFERENCE_ILLUMINANT1_DAYLIGHT_FLUORESCENT = 12, ACAMERA_SENSOR_REFERENCE_ILLUMINANT1_DAY_WHITE_FLUORESCENT = 13, ACAMERA_SENSOR_REFERENCE_ILLUMINANT1_COOL_WHITE_FLUORESCENT = 14, ACAMERA_SENSOR_REFERENCE_ILLUMINANT1_WHITE_FLUORESCENT = 15, ACAMERA_SENSOR_REFERENCE_ILLUMINANT1_STANDARD_A = 17, ACAMERA_SENSOR_REFERENCE_ILLUMINANT1_STANDARD_B = 18, ACAMERA_SENSOR_REFERENCE_ILLUMINANT1_STANDARD_C = 19, ACAMERA_SENSOR_REFERENCE_ILLUMINANT1_D55 = 20, ACAMERA_SENSOR_REFERENCE_ILLUMINANT1_D65 = 21, ACAMERA_SENSOR_REFERENCE_ILLUMINANT1_D75 = 22, ACAMERA_SENSOR_REFERENCE_ILLUMINANT1_D50 = 23, ACAMERA_SENSOR_REFERENCE_ILLUMINANT1_ISO_STUDIO_TUNGSTEN = 24

} |

| enum | acamera_metadata_enum_acamera_sensor_test_pattern_mode

{

ACAMERA_SENSOR_TEST_PATTERN_MODE_OFF = 0, ACAMERA_SENSOR_TEST_PATTERN_MODE_SOLID_COLOR = 1, ACAMERA_SENSOR_TEST_PATTERN_MODE_COLOR_BARS = 2, ACAMERA_SENSOR_TEST_PATTERN_MODE_COLOR_BARS_FADE_TO_GRAY = 3, ACAMERA_SENSOR_TEST_PATTERN_MODE_PN9 = 4, ACAMERA_SENSOR_TEST_PATTERN_MODE_CUSTOM1 = 256

} |

| enum | acamera_metadata_enum_acamera_shading_mode

{

ACAMERA_SHADING_MODE_OFF = 0, ACAMERA_SHADING_MODE_FAST = 1, ACAMERA_SHADING_MODE_HIGH_QUALITY = 2

} |

| enum | acamera_metadata_enum_acamera_statistics_face_detect_mode

{

ACAMERA_STATISTICS_FACE_DETECT_MODE_OFF = 0, ACAMERA_STATISTICS_FACE_DETECT_MODE_SIMPLE = 1, ACAMERA_STATISTICS_FACE_DETECT_MODE_FULL = 2

} |

| enum | acamera_metadata_enum_acamera_statistics_hot_pixel_map_mode

{

ACAMERA_STATISTICS_HOT_PIXEL_MAP_MODE_OFF = 0, ACAMERA_STATISTICS_HOT_PIXEL_MAP_MODE_ON = 1

} |

| enum | acamera_metadata_enum_acamera_statistics_lens_shading_map_mode

{

ACAMERA_STATISTICS_LENS_SHADING_MAP_MODE_OFF = 0, ACAMERA_STATISTICS_LENS_SHADING_MAP_MODE_ON = 1

} |

| enum | acamera_metadata_enum_acamera_statistics_ois_data_mode

{

ACAMERA_STATISTICS_OIS_DATA_MODE_OFF = 0, ACAMERA_STATISTICS_OIS_DATA_MODE_ON = 1

} |

| enum | acamera_metadata_enum_acamera_statistics_scene_flicker

{

ACAMERA_STATISTICS_SCENE_FLICKER_NONE = 0, ACAMERA_STATISTICS_SCENE_FLICKER_50HZ = 1, ACAMERA_STATISTICS_SCENE_FLICKER_60HZ = 2

} |

| enum | acamera_metadata_enum_acamera_sync_frame_number

{

ACAMERA_SYNC_FRAME_NUMBER_CONVERGING = -1, ACAMERA_SYNC_FRAME_NUMBER_UNKNOWN = -2

} |

| enum | acamera_metadata_enum_acamera_sync_max_latency

{

ACAMERA_SYNC_MAX_LATENCY_PER_FRAME_CONTROL = 0, ACAMERA_SYNC_MAX_LATENCY_UNKNOWN = -1

} |

| enum | acamera_metadata_enum_acamera_tonemap_mode

{

ACAMERA_TONEMAP_MODE_CONTRAST_CURVE = 0, ACAMERA_TONEMAP_MODE_FAST = 1, ACAMERA_TONEMAP_MODE_HIGH_QUALITY = 2, ACAMERA_TONEMAP_MODE_GAMMA_VALUE = 3, ACAMERA_TONEMAP_MODE_PRESET_CURVE = 4

} |

| enum | acamera_metadata_enum_acamera_tonemap_preset_curve

{

ACAMERA_TONEMAP_PRESET_CURVE_SRGB = 0, ACAMERA_TONEMAP_PRESET_CURVE_REC709 = 1

} |

| enum | acamera_metadata_section

{

ACAMERA_COLOR_CORRECTION, ACAMERA_CONTROL, ACAMERA_DEMOSAIC, ACAMERA_EDGE, ACAMERA_FLASH, ACAMERA_FLASH_INFO, ACAMERA_HOT_PIXEL, ACAMERA_JPEG, ACAMERA_LENS, ACAMERA_LENS_INFO, ACAMERA_NOISE_REDUCTION, ACAMERA_QUIRKS, ACAMERA_REQUEST, ACAMERA_SCALER, ACAMERA_SENSOR, ACAMERA_SENSOR_INFO, ACAMERA_SHADING, ACAMERA_STATISTICS, ACAMERA_STATISTICS_INFO, ACAMERA_TONEMAP, ACAMERA_LED, ACAMERA_INFO, ACAMERA_BLACK_LEVEL, ACAMERA_SYNC, ACAMERA_REPROCESS, ACAMERA_DEPTH, ACAMERA_LOGICAL_MULTI_CAMERA, ACAMERA_DISTORTION_CORRECTION, ACAMERA_HEIC, ACAMERA_HEIC_INFO, ACAMERA_AUTOMOTIVE, ACAMERA_AUTOMOTIVE_LENS, ACAMERA_SECTION_COUNT, ACAMERA_VENDOR = 0x8000

} |

| enum | acamera_metadata_section_start

{

ACAMERA_COLOR_CORRECTION_START = acamera_metadata_section.ACAMERA_COLOR_CORRECTION << 16, ACAMERA_CONTROL_START = acamera_metadata_section.ACAMERA_CONTROL << 16, ACAMERA_DEMOSAIC_START = acamera_metadata_section.ACAMERA_DEMOSAIC << 16, ACAMERA_EDGE_START = acamera_metadata_section.ACAMERA_EDGE << 16, ACAMERA_FLASH_START = acamera_metadata_section.ACAMERA_FLASH << 16, ACAMERA_FLASH_INFO_START = acamera_metadata_section.ACAMERA_FLASH_INFO << 16, ACAMERA_HOT_PIXEL_START = acamera_metadata_section.ACAMERA_HOT_PIXEL << 16, ACAMERA_JPEG_START = acamera_metadata_section.ACAMERA_JPEG << 16, ACAMERA_LENS_START = acamera_metadata_section.ACAMERA_LENS << 16, ACAMERA_LENS_INFO_START = acamera_metadata_section.ACAMERA_LENS_INFO << 16, ACAMERA_NOISE_REDUCTION_START = acamera_metadata_section.ACAMERA_NOISE_REDUCTION << 16, ACAMERA_QUIRKS_START = acamera_metadata_section.ACAMERA_QUIRKS << 16, ACAMERA_REQUEST_START = acamera_metadata_section.ACAMERA_REQUEST << 16, ACAMERA_SCALER_START = acamera_metadata_section.ACAMERA_SCALER << 16, ACAMERA_SENSOR_START = acamera_metadata_section.ACAMERA_SENSOR << 16, ACAMERA_SENSOR_INFO_START = acamera_metadata_section.ACAMERA_SENSOR_INFO << 16, ACAMERA_SHADING_START = acamera_metadata_section.ACAMERA_SHADING << 16, ACAMERA_STATISTICS_START = acamera_metadata_section.ACAMERA_STATISTICS << 16, ACAMERA_STATISTICS_INFO_START = acamera_metadata_section.ACAMERA_STATISTICS_INFO << 16, ACAMERA_TONEMAP_START = acamera_metadata_section.ACAMERA_TONEMAP << 16, ACAMERA_LED_START = acamera_metadata_section.ACAMERA_LED << 16, ACAMERA_INFO_START = acamera_metadata_section.ACAMERA_INFO << 16, ACAMERA_BLACK_LEVEL_START = acamera_metadata_section.ACAMERA_BLACK_LEVEL << 16, ACAMERA_SYNC_START = acamera_metadata_section.ACAMERA_SYNC << 16, ACAMERA_REPROCESS_START = acamera_metadata_section.ACAMERA_REPROCESS << 16, ACAMERA_DEPTH_START = acamera_metadata_section.ACAMERA_DEPTH << 16, ACAMERA_LOGICAL_MULTI_CAMERA_START = 
acamera_metadata_section.ACAMERA_LOGICAL_MULTI_CAMERA << 16, ACAMERA_DISTORTION_CORRECTION_START = acamera_metadata_section.ACAMERA_DISTORTION_CORRECTION << 16, ACAMERA_HEIC_START = acamera_metadata_section.ACAMERA_HEIC << 16, ACAMERA_HEIC_INFO_START = acamera_metadata_section.ACAMERA_HEIC_INFO << 16, ACAMERA_AUTOMOTIVE_START = acamera_metadata_section.ACAMERA_AUTOMOTIVE << 16, ACAMERA_AUTOMOTIVE_LENS_START = acamera_metadata_section.ACAMERA_AUTOMOTIVE_LENS << 16, ACAMERA_VENDOR_START = acamera_metadata_section.ACAMERA_VENDOR << 16

} |

Enums Documentation

Tags

| Enumerator | Value | Description |

|---|---|---|

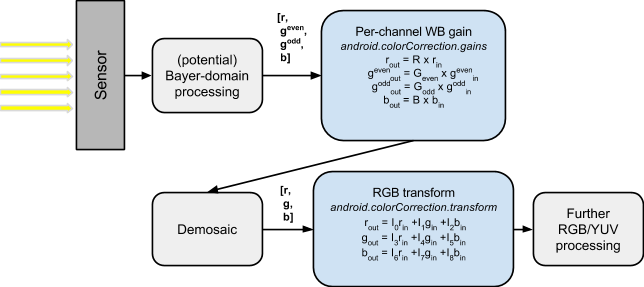

| ACAMERA_COLOR_CORRECTION_MODE | acamera_metadata_section_start.ACAMERA_COLOR_CORRECTION_START | The mode control selects how the image data is converted from the sensor's native color into linear sRGB color. |